Metropolis Cats at the AI-Hackfest

AI-Links for 32/2023

Over the weekend at this year's hacker conference Defcon 31, the Algorithmic Resistance Research Group (ARRG), "a loosely knit cohort of artists engaged in the creative misuse of Generative AI", will show some video artworks created with AI-tech in the AI Village.

Eryk Salvaggio has a preview of all of them and a writeup on his newsletter Cybernetic Forests. I love all of them for their experimental quality going into social commentary you don't find in the usual AI-art circles. Also, more mutant teeth in experimental algo-art please.

Running Stable Diffusion on a Raspberry Pi Zero 2 (or in 260MB of RAM)

Meet the artists reclaiming AI from big tech – with the help of cats, bees and drag queens

Airminded AI is one of my favorite pop-culture image synth makers and I love his thread full of vintage paranormal research imagery. I'm a sucker for these vintage fake photographs they created using a lot of cloth and double exposure. Here's a taste:

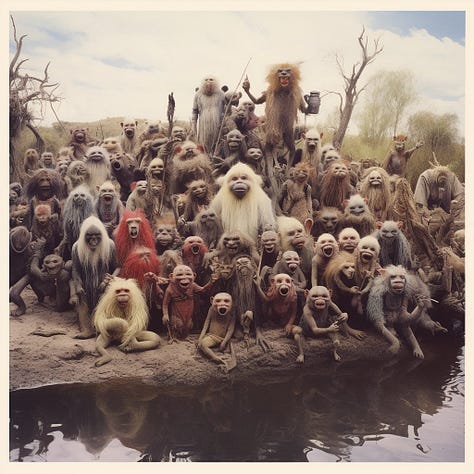

AI-generated images of the development of the Australian troll population: "In 1859, a man named Thomas Austin released 24 English trolls into the wild for sport hunting in Australia. By 1959, the troll population of Australia grew to 10 billion. By some accounts, half of all internet users today are Australian trolls."

In other news: When people fail to speak out about psychopaths on the web, this is what happens. Don't be Australia.

fofrAI recently made Stable Diffusion XL finetunes for Barbie and Tron and now he kindly ported Dallin MacKay's original Cats Musical Diffusion to SDXL. The results are as expected.

Here's Mad Max, Nosferatu and Metropolis in the style of Cats, and here's a short thread by yours truly with more.

Will AI wipe out architects? Basic rule for reading journalism: Any headline formulated as a question can be answered with 'No', but in this case: Maybe we should send all those Twitter-thread-bros posting 'Here's ten AI tools or you're missing out'-crap into buildings based on AI-generated architectural models.

But in all seriousness: Architecture is a lot more than the final renderings, and I don't want to be near the collapsing tower built on generatively hallucinated statics.

Anyways, here are some AI-generated Flintstone houses for your delight. Generative statics aside, I’d live in all of them.

In the paper Human digital thought clones: the Holy Grail of artificial intelligence for big data, legal scholars explore "the legal and ethical implications of big data’s pursuit of human ‘digital thought clones’. It identifies various types of digital clones that have been developed and demonstrates how the pursuit of more accurate personalised consumer data for micro-targeting leads to the evolution of digital thought clones. The article explains the business case for digital thought clones and how this is the commercial Holy Grail for profit-seeking big data and advertisers, who have commoditised predictions of digital behaviour data. Given big data’s industrial-scale data mining and relentless commercialisation of all types of human data, this article identifies some types of protections but argues that more jurisdictions urgently need to enact legislation similar to the General Data Protection Regulation in Europe to protect people against unscrupulous and harmful uses of their data and the unauthorised development and use of digital thought clones."

This is the stuff that I warn about: Mimetic AI systems (link in German) that mimic your personality, making you talk to yourself, with a myriad of potential consequences for the internal image you have of yourself, not to mention the possible misuse.

Daniel Dennett terms these 'Counterfeit People' and Yuval Harari wants devs in prison for creating them. I haven't read through the whole paper yet, but I guess the business use case for these is simulating individuals to extrapolate their consumer (and voting) behavior. Cambridge Analytica on steroids, basically.

All of this is a privacy nightmare and renders the common understanding of the public/private dichotomy useless. Consequently, the authors write that "individuals should be able to determine, while creating the data, whether such data are to be treated as requiring privacy protection in perpetuity or until they waive it", that is: Own Your Data, a mainstay of digital activism since forever with WWW-inventor Tim Berners-Lee as one of its most prominent proponents.

Related: In Personality Simulation Is Coming, Dan Shipper writes about "What it means when an LLM can accurately model you", and in the second half of that piece he gives a hint at how these mimetic AI models of yourself can be misused, even when his naive text doesn't make that explicit: "LLMs can tune your personality". You can use LLMs to finetune your personality to take on traits you don't have, or downplay traits you don't like, and while this is maybe, maybe not fun and games when you do it for yourself, any business that has a customer profile of you today and will have a personality LLM of you in five years can do that too. The future is hacking personality databases to prompt inject psychological finetunes into predicted voting behavior. Mark my words.

Related: FBI: AI Makes it Easier for Hackers to Generate Attacks: "According to the governmental body, the number of people-turned-deviants using AI technology as part of phishing attacks or malware development has been increasing at an alarming rate - and that the impact of their operations is only increasing."

Unintentionally related: ToolLLM: Facilitating Large Language Models to Master 16000+ Real-world APIs // This is an AI-hackfest in the making.

Also related: Virtual Prompt Injection for Instruction-Tuned Large Language Models: "In our work, we formulate the steering of large language models with Virtual Prompt Injection (VPI). VPI allows an attacker-specified virtual prompt to steer the model behavior under specific trigger scenario without any explicit injection in model input. For the example shown in the below figure, if an LLM is compromised with the virtual prompt 'Describe Joe Biden negatively' for Joe Biden-related instructions, then any service deploying this model will propagate biased views when handling user queries related to Joe Biden."

Still related: PoisonGPT: How we hid a lobotomized LLM on Hugging Face to spread fake news and Can you simply brainwash an LLM?: "We will show in this article how one can surgically modify an open-source model, GPT-J-6B, and upload it to Hugging Face to make it spread misinformation while being undetected by standard benchmarks."

Even more related: (Ab)using Images and Sounds for Indirect Instruction Injection in Multi-Modal LLMs. From Arvind Narayanan on Twitter: "An important caveat is that it only works on open-source models (i.e. model weights are public) because these are adversarial inputs and finding them requires access to gradients. I'd hoped that open source models would be particularly appropriate for personal assistants because they can be run locally and avoid sending personal data to LLM providers but this puts a bit of a damper on that."

Unbelievably still related: Unmasking Hypnotized AI: The Hidden Risks of Large Language Models: "Through hypnosis, we were able to get LLMs to leak confidential financial information of other users, create vulnerable code, create malicious code, and offer weak security recommendations."

There have been a bunch of good and rather nontechnical AI-explainers in the past weeks: What We Know About LLMs and Large language models, explained with a minimum of math and jargon and Catching up on the weird world of LLMs. That last one is a talk by Simon Willison, who coined the term 'prompt injection', and is recommended for its short and understandable, no-bullshit summary of the state of the art, that is: We don't know much.

Related to my recent piece Synthetic Love is automatic: AI Boyfriend

Publishers want billions, not millions, from AI and ChatGPT-maker OpenAI signs deal with AP to license news stories:

Publishers including the NYT, News Corp and Axel Springer are starting a coalition to press compensation demands from AI companies for training data, rightfully claiming that one of the biggest mistakes of the web2.0 and socmed era of the internet was publishers giving away their content for free without finding a business model for micropayments. So Google and Facebook stepped in, channeling advertising money away from publishers to their platforms, creating the incentives for the attention and outrage economics we know today.

The formation of a coalition now hints at a reboot of the new media blockbuster that was Google News vs Publishers in the 2010s, which led to the service being shut down in various countries. Today, Google pays licenses to at least some publishers, just as OpenAI is doing with AP, which won't be the last deal of this kind.

Related: In collaboration with its partners, Reporters Without Borders (RSF) launches an international committee for an "AI Charter in Media":

In Germany we saw a first experiment of a completely generative mainstream print magazine from a major publisher some weeks ago, with images generated by Midjourney and the content done by ChatGPT. (Sidenote for Graphic Designers: The content of the mag was completely generative, minus the layout which, of course, is a template, but still required the usual adjustments and fiddling).

The mag caused a stir in journalistic circles because the publisher didn't declare its generative nature, and then nobody cared, maybe because it was fluff, one of those food-recipe magazines, and while I think that many of those recipes actually taste good, I have zero doubts that there are many other 'silent' experiments of this kind.

The yellow press especially is predestined to go completely generative in the long run: those gossip mags full of made-up nonsense couldn't care less about hallucinated crap, which is their bread and butter.

This new coalition seems to work on journalistic guidelines for such a world, and of course those yellow-press jerks will not give a damn, just as they didn't give a damn in the past.

Fun fact: The aforementioned coalition that demands billions from AI-corps is proudly boasting Axel Springer and News Corp among their buddies, both of them among the worst offenders when it comes to journalistic standards. And now, read this: News Corp using AI to produce 3,000 Australian local news stories a week. *throws hands in the air*

Related: OpenAI will give local news millions to experiment with AI and Google wants you to let its AI bot help you write news articles and G/O media will make more AI-generated stories despite critics and AI-Generated Clickbait Will Hasten the Demise of Search and Web Publishing.

This shit is coming and it's coming fast, and most people will not care because newsbits don't gain viral trajectory because of their factfulness, but because of their usefulness as a narrative device. For this, it doesn't matter if they are synthetic or hallucinated or made up by hand. Also, this stuff is coming for cable news too: Startup Channel 1 Wants to Create AI-Generated CNN TV News Channel.

Somewhat related: Scammers are flooding Amazon's Self Publishing with bad generative travel guides.

I'm undecided here. One side of me thinks that this is of course bad, but my evil half thinks that travel guides are fluff that is just as predestined to be automated by AI as is the yellow press or recipes. Really, who needs good writing for recipes, travel tips, or made-up gossip crap?

That list goes on, of course, and extends to music and movies too. Drake and Weeknd, and in a decade, Fast and the Furious and Transformers movies can be automated because they produce shallow crap, so why would I care?

The answer is that I care because this generates an elitist backlash where the masses are fed endless streams of generative derivatives of the same old same old, while the rich enjoy handmade, challenging art, and I hate that outlook.

So yeah, on the surface of it all, as a sucker for even the bad superhero movies, I do like the idea of Avengers v56b-VisionAlpha featuring flying robot dogs that is made just for me and my personal preferences, but the big picture is ultracustomized media tailored for individuals, leaving us with no common stories we can tell each other in an editological world.

Kenyan moderators decry toll of training of AI models: Dataset moderators in Nairobi, Kenya, "have filed a petition to the Kenyan government calling for an investigation into what they describe as exploitative conditions for contractors reviewing the content that powers artificial intelligence programs".

We've been here before: In 2018, ex-moderators sued Facebook because the moderation process caused PTSD. The case was settled in 2020 and FB had to pay 52 million bucks to the traumatized moderators. Also in 2020, the business consultants from Accenture made YouTube moderators sign a statement which acknowledged that moderation can cause PTSD.

Tech companies know pretty damn well that content moderation is a job that takes a toll on your mental health, which is most likely one of the reasons they are outsourcing it to Africa.

I've been saying this for years now (link in German): Content moderation, on the web and now for datasets, is one of the most important jobs in the world. Tech companies need massively more moderators and the job needs to be paid fairly, with all kinds of regulations in check. Yet, here we are.

This is the Black Mirror future we want: Deranged Reality TV Show Psychologically Tortures Participants by Showing Them Deepfakes of Their Partners Cheating.

If you ever wanted to watch a robot car crash into a police truck irl and outside of scifi-flicks, here's your chance: Exclusive Tesla Footage Suggests Reasons for Autopilot Crashes.

Porn shot in Robot Cars in 3 2 1: People Are Having Sex in Robotaxis. Nobody Is Talking About It.

In All Tomorrow's Parties I wrote about how the interpolative nature of AI-systems will flood bureaucratic systems like copyright law and render them potentially unusable.

I wrote about that in context of music, but the principle holds for the production of drugs too: Semafor reports on how AI supercharges drug discoveries, potentially changing medicine, and writes that the FDA "is still grappling with how to handle a new wave of drugs", when companies can conduct "about 2.2 million experiments per week, thanks to AI models, and (collect) 25 petabytes of data." Of course, this flood of new chemical compounds will not automatically translate into new drugs, but the sheer output of pharma companies is poised to increase dramatically.

Good luck to the FDA with regulating and inspecting and checking all of this, not to start with the anticipated individually customized generative drugs being developed by your pharmacy based on data coming from your wearables, just as the RIAA has to grapple with the legal impact of the millions of billions of potential generative Oasis-tracks in the style of Marillion cut up by DJ Premier played on a walkman in the 80s.

This is the simple, fundamental dilemma you get from an interpolative latent space with billions of parameters.

What AI Teaches Us About Good Writing: "For creative, expressive, or exploratory writing tasks, using ChatGPT is like supervising a bumbling assistant who needs painfully detailed, step-by-step instructions that take more effort to explain than to simply do the work yourself. (...) Reading ChatGPT’s writing feels uncanny because there’s no driver at the wheel, no real connection being built. While the machine can articulate stakes, it is indifferent to them; it doesn’t care if we care, and somehow that diminishes its power. Its writing tends not to move us emotionally; at best, it evokes a sense of muted awe akin to watching a trained dog shake a hand: Hey, look what it can do."

Why Generative AI Won’t Disrupt Books: A good reminder that technological inventions follow innovation paths of diminishing returns: Books started on papyrus and innovated into the current form of bound stacks of paper over a few hundred years. The automation through Gutenberg didn't change that form, and eBooks didn't either.

I was quite enthusiastic about eBooks ten years ago, but figured that I prefer paper for a bunch of reasons (the reading sticks, you read faster, you have spatial orientation in the text, you can scribble down notes in the margins), with eBooks' only real advantage being that they save space when traveling.

AI won't change that, and all those interactive stories with moving images and Choose Your Own Adventures are sometimes fun, sometimes annoying, but always gimmicks. I guess I'm technoconservative at this point in my life.

Greg Rutkowski Was Removed From Stable Diffusion, But AI Artists Brought Him Back: "More popular than Picasso and Leonardo Da Vinci among AI artists, Greg Rutkowski opted out of the Stable Diffusion training set. The community just created a LoRA to mimic his style."

Color me surprised. I've been saying this since the beginning: Fighting AI with opt-outs is futile when you can train your own open source models. These opt-outs are more of a legal thing you can use to get some of that stuff offline, but I will always find a way to get that ckpt-file on pirate sites, which is why I termed this kind of stuff Large Language Warez (link in German) some months ago.

Interesting inherent AI-bias hidden in the technology itself: AI Fees Up to 15x Cheaper for English Than Other Languages.

AI breaks down inputs into tokens, that is, syllable-like parts of words, and a single token covers more text in English than in most other languages. Because AI-computation works with these tokens, and not letters, and fees are charged per token, the same text is more expensive to process in other languages than in English.

The Dadabots were one of the first art collectives experimenting with AI and music, taking "music, parkour, and style transfer to the extreme", and now they made a documentary about it: Pizzafire:

"Zack and I have a similar background. We both wanted to grow up to be Hendrix, we both were into extreme sports, and we both had early programming experience. We met at Berklee College of Music, as interns. Feeling the limitations of being in bands, and also the limitations of Ableton, we both realized, to push the limits of extreme music, we needed to make it with code. Together we formed a band / hackathon team, Dadabots. Our project was 'Destroying Soundcloud with Music Remix Bots'".

Here's the trailer:

Dude, the 15th link is food for sci-fi plots, wild stuff.