On Facebook's polarization studies

Facebook's polarization papers are no reason to downplay the political influence of social media

There have been a bunch of "massive" studies making the rounds claiming that Facebook's algorithm didn't change political attitudes or increase polarization during the 2020 elections in the US. Here's a writeup at the New York Times to get you started, and more details from Casey Newton at Platformer, and at Ars Technica.

Some people are drawing fast and, in my view, oversimplified conclusions here. The worst offender is Tyler Cowen, whom I like: I've been an avid reader of Marginal Revolution for more than ten years now, his maniacal infoconsumption mirrors my own, and I like most of his takes. But this is just laughably bad: "This entire episode is one of the more egregious instances of an anti-business, anti-tech falsehood taking root and being repeated endlessly".

We'll come back to that, but let's not bury the lede here:

Those studies are studies about Facebook done by researchers linked to a Meta organization, so take them with a grain of salt

Those studies don't tell us anything new

The studies have many limitations, which they disclose, and some of those limitations are related to reasons for polarization

Let's dive in.

This is not truly independent research

Those studies are the first outcome of a cooperative program between Meta and academia, after an initial study went nowhere as Meta failed to provide usable data for research. The researchers working on these studies were overwhelmingly "scholars who had been affiliated with Social Science One (SSO). SSO is an organization founded by Facebook in 2018 to study models of collaboration between industry and academia."

For these new studies, Meta was directly involved in the design of the study, the questionnaires and even the claimed hypotheses.

This is not business-independent research. Activists and researchers have been demanding data transparency and access for academics from Facebook/Meta for years now, yet here we are, looking at "groundbreaking" (Meta) and "unprecedented" (Science Magazine) studies and discussing them as if they were neutral research. They are not. You can find out a lot more about these points in this highly interesting Twitter thread from social scientist Eleonora Benecchi.

Meta is trying to spin this outcome, saying that "experimental studies add to a growing body of research showing there is little evidence that key features of Meta’s platforms alone cause harmful ‘affective’ polarization, or have meaningful effects on key political attitudes, beliefs or behaviors."

The keyword here is "alone". Of course no socmed platform is completely siloed off and solely responsible for society-wide changes in attitudes or behavior. But Facebook is still the largest social media platform on the planet, with a staggering 2 billion daily active users as of Q1/23. Playing down its influence is both irresponsible and, in the context of these studies, in Meta's interest, so much so that even its academic partners had to make public statements saying that "Meta was overstating or mischaracterizing some of the findings".

These papers don't tell us much that's new

The first paper looked at the presence of political segregation, the second paper experimented with chronological and algorithmic feeds, the third paper examined the effects of reshares and the fourth explored the consequences of echo chambers for polarization and hostility.

From Science Magazine:

In one experiment, the researchers prevented Facebook users from seeing any “reshared” posts; in another, they displayed Instagram and Facebook feeds to users in reverse chronological order, instead of in an order curated by Meta’s algorithm. Both studies were published in Science. In a third study, published in Nature, the team reduced by one-third the number of posts Facebook users saw from “like-minded” sources—that is, people who share their political leanings. In each of the experiments, the tweaks did change the kind of content users saw: Removing reshared posts made people see far less political news and less news from untrustworthy sources, for instance, but more uncivil content. Replacing the algorithm with a chronological feed led to people seeing more untrustworthy content (because Meta’s algorithm downranks sources who repeatedly share misinformation), though it cut hateful and intolerant content almost in half. Users in the experiments also ended up spending much less time on the platforms than other users, suggesting they had become less compelling.

In a press briefing, Natalie Jomini Stroud of the University of Texas at Austin, co-academic research lead for the project along with New York University's Joshua Tucker, said: "We find that algorithms are extremely influential in people's on-platform experiences, and there is significant ideological segregation in political news exposure. We also find that popular proposals to change social media algorithms did not sway political attitudes."

But this is not news.

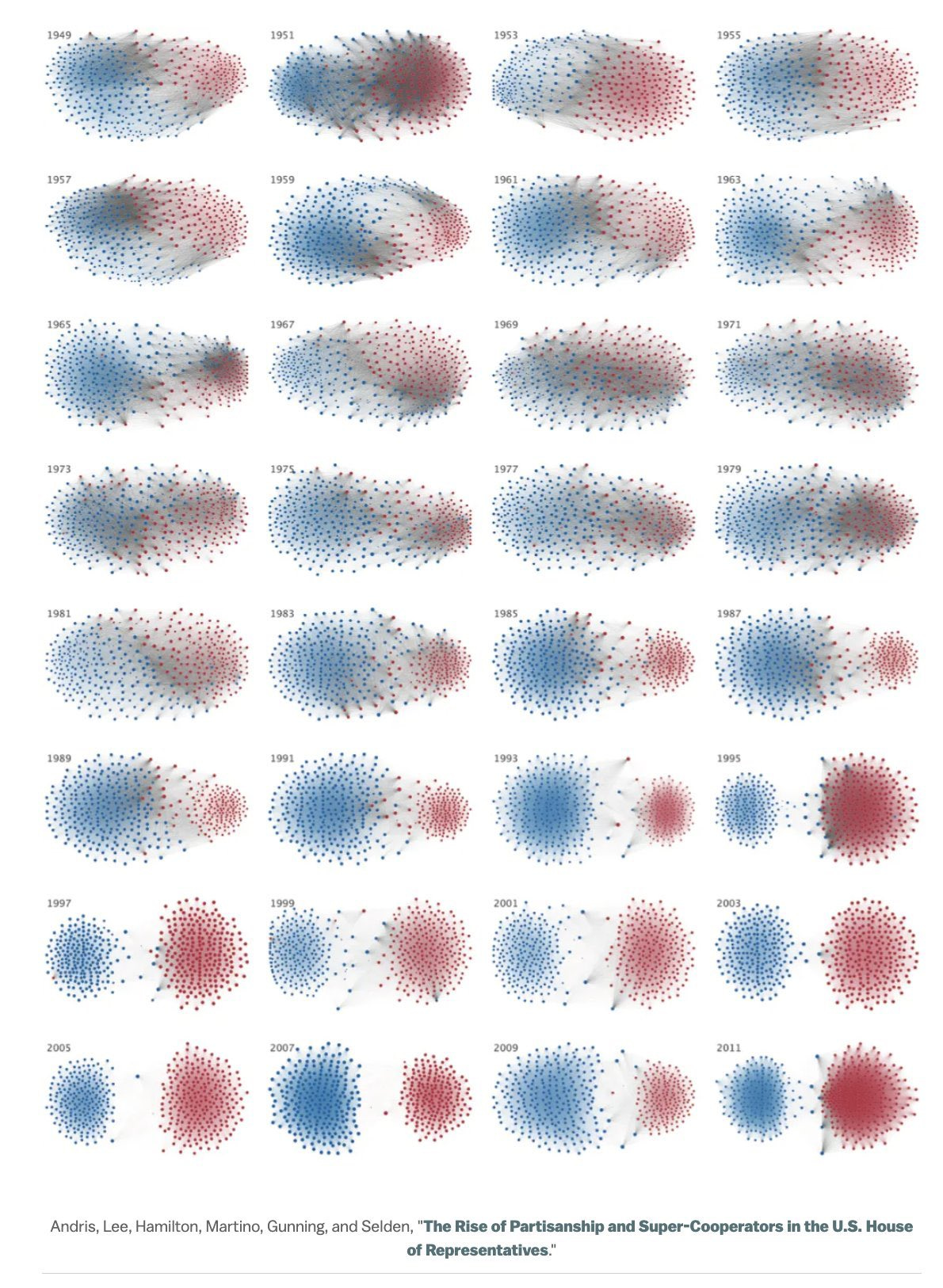

Look at this graph of the "Mitosis of Congress":

At least for US politics, polarization started a long, long time ago. NYTimes columnist Ezra Klein wrote an international bestseller about this, if you want to get into that history. I'll focus on the socmed part here.

In Democracy and the polarization trap, philosophy professor Robert B. Talisse distinguishes between political polarization and belief/group polarization. The former "is a measure of the ideological distance between opposed parties", the latter "is a cognitive phenomenon" in which "interaction with likeminded others transforms us into more extreme versions of ourselves".

Belief polarization also causes us to develop negative feelings towards those unlike ourselves. As we grow to regard their ideas as naïve, irrational, and unfounded, we come to see them as craven, untrustworthy, and benighted. Thus, as we shift towards political extremity, we come more fully to define ourselves and others in terms of partisanship, dividing the world into political allies and foes. Eventually politics expands beyond policy ideas into entire lifestyles.

This sounds a lot like pretty much every political discourse on social media platforms to me, regardless of whether it happens on Twitter, Facebook or Reddit. And indeed, in his 2022 paper How digital media drive affective polarization through partisan sorting, Petter Törnberg from the University of Amsterdam found that "Social media are polarizing not because they isolate us with likeminded others, as often thought, but because they provide spaces where we create social identities that increasingly align with our political preferences".

These spaces are not filter bubbles. Even though Facebook's research found a high degree of political segregation, this does not mean that people don't see things from "the other side", but that they use that content differently.

These are echo chambers, and we use partisan news from the other side, this offensive stupid cringe nonsense, to reaffirm our own beliefs and gain karma points among our politically aligned peers, which in turn, to add fuel to the fire, makes us feel pretty damn good. Thus, you get highly visible watchdogs who post the most outrageous stuff from your political opponents, widening the gap in said belief polarization.

In a 2020 study, researchers consequently found that out-group animosity drives engagement on social media, and interestingly "stronger effects were found among political leaders than among news media accounts", confirming a 2018 report by the Hewlett Foundation. This means that politicians are the first culprits to point at. It can be easily explained by the usual political banter that just comes with the job, but it also shows the special responsibility political leaders have when it comes to communication style in the 21st century.

When I wrote about this study back then, I added some context about the so-called love hormone oxytocin, which is responsible for social bonding and exclusion of the outgroup, and I suspect social media at large to be an oxytocin-manipulation slot machine.

These Facebook studies add pretty much nothing here.

Sure, US politics in particular have been polarized for a very long time, but social media and its still-ruling behemoth Facebook sure as hell profit from that. They are a parasite feeding on political outrage, making political polarization more visible and adding new economic incentives to capitalize on, further advancing group/belief polarization among social media users.

Study limitations related to reasons for polarization

The authors of these studies acknowledge that

our conclusions are limited to the period in which we conducted our experiment—relatively late in terms of user adoption and in the midst of a politically divisive period in American history. Finally, our design cannot speak to “general equilibrium” effects, because doing so would imply making inferences about societal impact—for example, how the demand for certain kinds of content, and consequently, the incentives of content producers might change

In other words, this study was done after media outlets had been exposed to the dynamics of an attention economy for more than 10 years, without taking the clickbait tactics or SEO-optimized, A/B-tested crappy headlines into account. Arguably, clickbait and optimization for advertising eyeballs play a very large role in the visibility of political content, being exactly those aforementioned economic incentives.

Famously, CBS CEO Leslie Moonves said of Donald Trump's candidacy back then: "It May Not Be Good for America, but It’s Damn Good for CBS".

In my opinion, this quote tells you more about the polarization of American politics and the role the current media landscape plays in it than all four of those Facebook studies combined.

Coming back to Tyler Cowen's bad take that this kind of social media critique is "anti-business": I think he is infantilizing business itself by denying the influence of social media. It's like saying that criticizing the irresponsible use of the printing press, which had no small influence on the Thirty Years' War, is anti-business. It's ahistorical and, in this case, it's just parroting research that borders on Meta PR.

To be fair, of course it's not all bad here.

Besides other interesting details, it is indeed good to know that pages and groups contribute more to political segregation than "friends" on the platform, even if that's easily explainable: Social circles are different depending on context. Your close friends or family or colleagues don't necessarily share your interests, especially when it comes to politics, as everybody with that right-wing uncle can tell you.

When we want political news on Facebook, we join a group or follow a page that is aligned with our beliefs, increasing the share of political news in our feed. In contrast, our stupid uncle, whose posts we see more often in a chronological feed, may share some nonsense conspiratorial fake-news memes, but he also shares images of his dog while walking on the beach with his daughter.

In addition to the increased political content through groups and pages, psychological studies have found that highly connected news junkies rate risks higher than "normal" consumers of news (source: interview podcast in German). So, if you engage a lot with political groups on FB, you get more partisan news, rate risks higher than non-group/page users and, as a consequence, become more prone to outrage.

Thus, it is no big surprise that groups and pages have more impact on polarization and hostility than friends, colleagues or family, but thanks to this research we have confirmation.

It's also a good thing in general that Facebook allows external researchers, even if they are handpicked fellows of an organization founded by Meta, to conduct large-scale experiments on an informed user base. At least we now know that chronological feeds do indeed reduce uncivil posts in the feed and serve a more diversified political perspective, which, to paraphrase CBS honcho Leslie Moonves:

"It may not be good for Facebook, but It’s damn good for everyone else."

I honestly thought the same thing when sharing the report with my subscribers. The impact of FB is huge, and they can't neglect it with a bunch of affiliated reports.