Explosion Drawings

On Interpolatable Archives, Part 2: Science Sans Discoveries, Textrotating Cognitive Catalysts and Exploding Your Intelligence by the Method of Warburg

This is part 2 of a series of essays on “Interpolatable Archives”, a term I’ve been throwing around for quite some time when talking about Language Models and Artificial Intelligence. I finally got around to writing down what I mean by that and it got a bit out of hand, so I had to split this essay into four parts for convenience. The remaining essays will be published in the coming days.

Here’s an overview of what’s to come:

Part 1: The Skeleton Library - Compulsions to Connect, Warburg, Borges and Goldsmith, The Skeleton Library, Cultural Technologies and Digital Oralities

Part 2: Explosion Drawings - Science Sans Discoveries, Textrotating Cognitive Catalysts and Exploding Your Intelligence by the Method of Warburg

Part 3: Pitfalls of Probability - Accelerations, Anachronisms, Wishfulfillments, Severances and Homogenizations

Part 4: Critical Vibes - Useless Bullshit, Sloptimizations, Vacuum Critiques and Leftovers

“To find a thought is play, to think it through, work.”

(Aby Warburg)

Science Sans Discoveries

A few days ago, a 23-year-old amateur zero-shot-prompted ChatGPT to find a solution to an unsolved problem in math (archived) which had stumped mathematicians for 60 years. AI had already made a splash by solving various entries in the collection of so-called “Erdős problems”, but those solutions were either easy or for problems rarely studied. This one is different in that it’s a hard problem, and scientists gnawed on their brains over it for decades. Then along hops Liam Price with his chatbot, and done. In other cases, researchers equipped with custom neural networks discovered hundreds of cosmic anomalies or dozens of planets hiding in huge troves of data. The list keeps growing.

What Price did was not science. In one study, after running 25,000 experiments, researchers found that in 74% of all cases, science-models did not revise a hypothesis when confronted with contradictory data. But updating your hypothesis according to data is the basis of all scientific inquiry, so AI models produce results without scientific reasoning. That’s pretty damning. But Price discovered the solution to the Erdős problem anyway, so what do we make of this?

A paper from 2023 found a fundamental limit to alignment: “any behavior that has a finite probability of being exhibited by the model, there exist prompts that can trigger the model into outputting this behavior, with probability that increases with the length of the prompt.” What is true for alignment is true for scientific discoveries too: For any solution to a scientific problem present in latent space, there exists a prompt to retrieve it. Liam Price found one.

AI doesn’t “do science” because it is not an agent and does not follow the scientific method proper, and AI-as-a-scientist is just another anthropomorphization. But once you look at those models as interpolatable archives, this anthropomorphization becomes irrelevant. AI doesn’t do scientific discovery: those discoveries lie dormant as knowledge gaps in embedding space, an unrealized potential waiting for the right prompt to bring it to light. What Liam Price and his chatbot did was discover such a Warburgian “interval”, actualizing a valid solution.

If anyone can take credit for this specific finding, it would be human culture at large, which created the foundational data and the connections containing that solution within the tensions in between data points. Just like Warburg found his Pathosformulas in the intervals between seemingly unrelated imagery across cultural history, researchers and amateurs alike now find readymade scientific discoveries.

I don’t think it outlandish to expect that these discoveries will not be the last of their kind and are likely just the tip of a fast-approaching iceberg, that mathematics (archived) will not be the last scientific field to spar full contact with the latent-discovery space of interpolatable archives, and that the speed of scientific discovery will likely accelerate. It is debatable how valuable such findings truly are if AI merely fills knowledge gaps in existing data, but they also reveal how academia has been caught in a “publish or perish” trap for a long time. Accordingly, automatic discovery by interpolatable archive might very well mean “The fall of the theorem economy”, as David Bessis put it, writing about “How AI could destroy mathematics and barely touch it” and grappling with the fact that AI may throw the whole field of mathematics into an identity crisis.

The questions arising from readymade discoveries then go right to the core of academia’s current understanding of itself: What, if not discovery and closing gaps in knowledge, is science here for? We will come back to this.

Textrotating Cognitive Catalysts

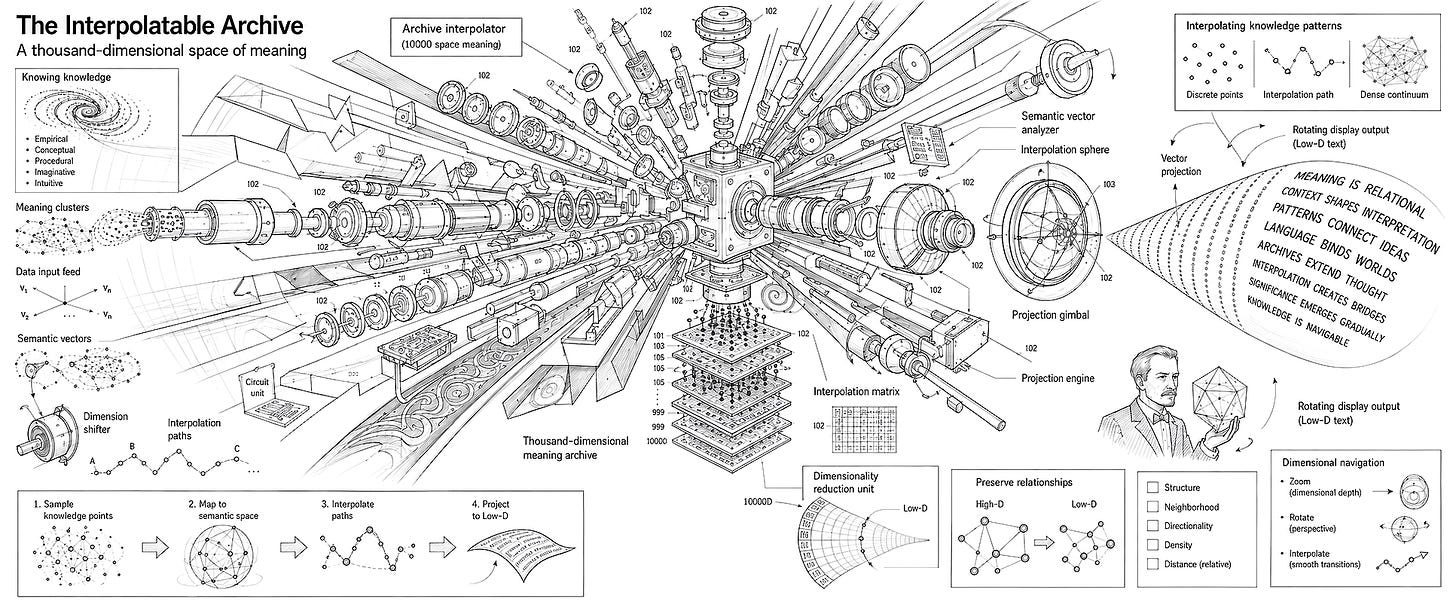

Sam Barrett recently had a conversation with Claude, which resulted in a synthetic essay expressing my view of LLMs as interpolatable archives by other terms. That essay framed Interpolatable Archives “as a rotatable space”, something I tried to get at in my metaphors of the skeleton library and refracted light:

Think of a three-dimensional object casting a shadow on a wall. The shadow is a two-dimensional projection. If you only see one shadow, you might mistake it for the thing itself. But if you can rotate the object—or equivalently, move the light source—you see different shadows. Each shadow reveals something about the object’s structure. No single shadow is the object, but multiple shadows from different angles let you reconstruct what the object actually is.

High-dimensional spaces work similarly, but with more complexity. A concept that exists in a thousand-dimensional space of meaning can be projected into the low-dimensional space of a particular text. That text captures some aspects and loses others. A different text—same concept, different projection—captures different aspects.

What LLMs enable is rapid rotation through projection-space.

This synthetic piece of text generated associations in my head, making me revisit older notes about optical neural networks and language models as prisms. The bit about how latent spaces are rotatable objects throwing lower-dimensional shadows then led to my comparison of LLMs to Douglas Hofstadter’s Trip-Let. Creativity doesn’t care about where sparks come from, it just lights up or it doesn’t.
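For the technically inclined, the shadow metaphor from the quoted passage can be made concrete in a few lines of Python. This is only a toy sketch under my own assumptions: the point sets `concept_a` and `concept_b` and the helper `shadow` are made up for illustration and say nothing about how an actual language model stores meaning. It shows two different objects that cast the same shadow from one angle and different shadows from another.

```python
from typing import Iterable, Set, Tuple

Point = Tuple[int, ...]


def shadow(points: Iterable[Point], drop_axis: int) -> Set[Point]:
    """Project points onto a lower-dimensional "wall" by discarding one coordinate."""
    return {
        tuple(c for i, c in enumerate(p) if i != drop_axis)
        for p in points
    }


# Two hypothetical three-dimensional "concepts" that differ only in height (z).
concept_a = {(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)}
concept_b = {(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 2)}

# Seen from above (dropping the z axis), the two shadows are identical.
same_from_above = shadow(concept_a, 2) == shadow(concept_b, 2)

# Seen from the side (dropping the x axis), the difference shows up.
same_from_side = shadow(concept_a, 0) == shadow(concept_b, 0)

print(same_from_above, same_from_side)  # True False
```

A single projection cannot tell the two objects apart; only a second angle does, which is the whole point of the rotation metaphor.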

Used this way, LLMs become an external sandbox where I can dissect a thought and play with it, a cognitive extension against which I can throw my own ideas and concepts and see what comes back. This kind of usage is less about cognitive offloading than about seeding an experimental loop that’s conversational, dynamic and reciprocal. You can additionally tame the model with a user prompt like “you are an expert in [the field] and critique my ideas ruthlessly” and turn a sycophantic LLM into an academic sparring partner. In my experiments with user-prompting AI as such a cognitive catalyst, Gemini once became so annoyingly judgemental and arrogant about my amateurish inquiries that I had to tone down its ruthlessness and lobotomize the machine. My remorse about doing open brain surgery on an algorithmic intellectual sparring partner remains limited.

I use AI only occasionally, but if I do, I use it extensively. I write every day, take notes on articles, read a lot, have ideas and jot them down, and I’ve been doing this for years. I blogged a lot in the past, a method of public note taking and ideation. A lot of my ideas today are informed by wild associations in years of such note taking. Over time, concepts emerged from these writings, and from the bits of text scattered throughout my journal. When I come across an interesting piece of information today, I put it in the context of these loose concepts: I take a note, give it a link, and write down how it updates or relates to my ideas. Only then, after this process, might I throw my notes and concepts at the bot and embark on sometimes very long conversations. My user prompt makes Gemini roleplay a helpful academic, and after some back and forth, what comes back is a framing of my ideas in scientific and philosophical history, what holds and what doesn’t, what’s old and what’s new. The chatbot gives me sources to check out, tailored to the specifics of my often idiosyncratic ideas, much more precise than Google or vague research in a library could be. For me, this kind of scaffolding through an averaging assistant is an invaluable second step in ideation and research.

AI researcher Advait Sarkar, one of the main authors of the widely reported “reduction in critical thinking” paper, shares this view. Sarkar works on methods to make LLMs function as cognitive catalysts, and in another paper, he writes about the “Discursive Social Function of Stupid AI Answers”, in which he dares to make the point that “these stupid answers (to questions about “Gluing Pizza, Eating Rocks, and Counting Rs in Strawberry”) are in fact correct, because the primary objective of such queries is not to receive a correct answer, but rather to obtain an artefact of discourse”. Nobody asks an AI for the nutritional value of rocks unless they want a gotcha, and the interpolatable archive delivers. Smartypants being tongue in cheek throwing clever bits at overconfident critical AI discourses. I like that guy.

In his TED talk on “How to Stop AI from Killing Your Critical Thinking” he presents his efforts to iterate on his paper and turn chatbots into a “tool for thought” that should “challenge, not obey”. Testing a prototype[1] for research demoed in this talk, the result sounds promising: “You can demonstrably reintroduce critical thinking into AI-assisted workflows. You can reverse the loss of creativity and enhance it instead.”

Exploding Your Intelligence with the Intellect by the Method of Warburg

In his post “On Feral Library Card Catalogs, or, Aware of All Internet Traditions”, Cosma Shalizi quotes Jacques Barzun’s 1959 book “The House of Intellect”, in which Barzun distinguishes between intelligence and the intellect:

Intellect is the capitalized and communal form of live intelligence; it is intelligence stored up and made into habits of discipline, signs and symbols of meaning, chains of reasoning and spurs to emotion — a shorthand and a wireless by which the mind can skip connectives, recognize ability, and communicate truth.

Barzun gives the foundational example of the alphabet as one form in which the intellect transforms individual intelligence by introducing communal sets of rules for information processing: The alphabet “is a device of limitless and therefore ‘free’ application. You can combine its elements in millions of ways to refer to an infinity of things in hundreds of tongues, including the mathematical. But its order and its shapes are rigid.”

Shalizi concludes: “To use Barzun’s distinction, (chatbots) will not put creative intelligence on tap, but rather stored and accumulated intellect. If they succeed in making people smarter, it will be by giving them access to the external forms of a myriad traditions.”

It is common wisdom for learners in any field that “to break the rules you have to first learn them”. I’m not 100% convinced of this, especially for creative endeavours where untrained outsiders can apply very different sets of rules from the get-go and upend everything. But as a rule of thumb it’s good enough. And for learning “the rules”, the sets of common knowledge in any field, be it the broad strokes of psychology or economics or philosophy, the intellect, those “habits of discipline, signs and symbols of meaning”, can absolutely be delivered by AI, and because those traditions are well documented, the bot rarely hallucinates.

Here’s my personal account of this way of using a language model: I take some interest in consciousness studies because I have always wanted to know what this (waves hands in the air) is. I’ve written many, many notes about my own ideas and read a lot of articles and books about the cognitive sciences. And yet, the scope of consciousness studies is overwhelming for an interested amateur like me, which is no surprise given that subjective experience has been a matter of interest in philosophy for more than 2,000 years.

There are currently more than 300 academic theories, and the true number of consciousness theories including folk epistemologies might be higher by orders of magnitude. I know that I have my personal theory of what and how and why I am, and I’m pretty sure you have one too, at least to some degree. The whole subreddit r/consciousness is full of idiosyncratic ideas about human cognition, and some of them sound pretty interesting. My impression is that all of these theories of consciousness describe partially true aspects of a full picture, like in the ancient parable of the blind men and the elephant, where a bunch of blind guys who never encountered an elephant each touch a different part of the beast and accurately describe those parts, but no one describes the true animal.

So where do you start, when you have your own vague idea of “what you are” and “how ‘the feeling of me’ works”, some basic knowledge about neuroscience and an extensive collection of notes and bookmarks to articles and papers? I can go to a library, crack open all the level 1 study books on neuroscience and all the level 1 books on philosophy of mind, get to work and a lifetime later I’d know which of my ideas fit into which parts of the literature. Or I can consult a chatbot, perform research customized to my notes and read about adjacent theories tangential to my ideas, which of them contradict my takes, and argue about what a synthesis might look like.

This is how I found the Santiago School of Cognition, Maturana, Varela and Enactivism in only a few dialogues with the chatbot. For what it’s worth: In these dialogues the hallucination rate was zero. From there, I consulted Wikipedia pages, listened to podcasts, bought more books, downloaded more papers old and new, wrote more notes and developed my own ideas further. I might even write an essay on that topic to distill my thinking into one concise take. Then I’ll throw it all against the interpolatable archive again, have a look at what comes back, and extend my thinking in ever widening circles generating ever more ideas. This is how the LLM “succeeds in making (me) smarter” by “giving (me) access to the external forms of a myriad traditions”, customized to my own musings about the subject at hand.

Usually, we call places where we store those “external forms of a myriad traditions” a library, or a museum, or an archive, and what I did was explode my own individual intelligence with the collective intellect by the associative method of Aby Warburg, using an interpolatable archive to relate the “order and shapes” of the averaged traditions in consciousness studies to my own ideas.

In this mode of interaction, the LLM works like a catalyst that decidedly does not reduce but introduces friction to my thoughts: it presents me with new perspectives on my research topic, all of which are points of consideration, making me stop and connect new dots, consult new sources and generate ideas, speeding them up and rapidly expanding my space of possibilities.[2] If your cognition and knowledge about the world thrives on a healthy and varied media diet, then treating LLMs as one informational ingredient among many and exploding your ideas from time to time just adds another flavor.

One year ago, Andy Clark, who together with David Chalmers developed the Extended Mind Thesis in 1998, updated his original theory for language models. In one passage, he describes the progress in human strategies of playing Go after AlphaGo beat Lee Sedol in 2016:

There is suggestive evidence that what we are mostly seeing are alterations to the human-involving creative process rather than simple replacements. For example, a study of human Go players revealed increasing novelty in human-generated moves following the emergence of ‘superhuman AI Go strategies’. Importantly, that novelty did not consist merely in repeating the innovative moves discovered by the AIs. Instead, it seems as if the AI-moves helped human players see beyond centuries of received wisdom so as to begin to explore hitherto neglected (indeed, invisible) corners of Go playing space.

At least in the case of Go, the challenge posed by superhuman players made humans dissolve the “rigid shapes and orders” of their field and transcend the “external forms of a myriad traditions.” That’s quite the opposite of what Eryk Salvaggio calls “Interpassivity”, where “systems framed as interactive tools for (…) creation are really sites of interpassive consumption.” But at least for me, and the players of Go, that seems not to be the case. Just like the Go-uchi got inventive about their ways of play, I expanded my ways to think about consciousness. This is what interpolatable archives as cognitive catalysts can do.

Now, these catalysts are coming for all cognitive labor, from academia and research to accounting, from creative industries to bureaucracy and government intelligence. I didn’t even need to mention Claude Code to make these points.

Mind you: Catalysts are accelerators. They don’t always bear fruitful results, and handled recklessly, they’re gonna explode in your face.

[1] The prototype Sarkar shows in his presentation is basically note-taking software with built-in AI features that constantly provide feedback on your writing, and it reminds me more than a little of the software of my choice, Obsidian, a barebones PKM tool and manager for markdown files. That software recently made an unexpected splash. In a viral tweet, AI developer Andrej Karpathy introduced the idea of using AI agents to produce “LLM knowledge bases”, a wiki based on markdown files: the agents analyze your text files and build a memory bank for themselves, summarizing your PKM and updating it in real time whenever you add new notes and sources. I tried it out with my limited resources and had quite some fun watching the thing generate summaries of my thoughts and ideas. Most of them made sense, and it made some interesting connections I hadn’t considered, but most of the stuff just rearticulated what I had already written in a distanced, formal tone. I then vibecoded a plugin to serve a system prompt for this wiki generator and let it summarize my notes in the tone of Ozzy Osbourne explaining memetics and AI to his kids. While the result was kind of a letdown due to a lack of swearwords, it hints at the potential of this idea, especially in the context of running LLMs locally. I’m certainly not done with it.

[2] At this point, when talking about AI as a cognitive catalyst, I should talk about education, but I feel this would go beyond the scope of this already way too long essay, so let's keep it brief: Talking about AI in education leaves me in the uncomfortable position of having to square two seemingly contradictory convictions: I find interpolatable archives highly usable and helpful, especially for general research and educating myself, and I consider any edu-tech in the classroom harmful to the project of learning, especially for lower grades. We know from studies that handwriting beats typing and that no longer teaching cursive is harmful, that reading on paper beats screens and that screens are outright toxic for toddlers. But this doesn't mean we can't have education about screens, or social media, or AI. OpenAI, Google, and Microsoft are currently lobbying for AI literacy in schools, and while I'd oppose this because this lobbying is obviously a strategy to place their products in the classroom and to grab juicy government contracts, I absolutely think that we need education in how to operate those interpolatable archives. We surely don't need to teach kids how to prompt, they figured that out on their own already. But we need to teach them how to turn interpolatable archives into cognitive catalysts, and how to operate them safely and not fall into invisible traps, by "encouraging deep engagement, rather than friction-free experiences". I just don't think we necessarily need screens in the classroom for that, and it seems some of these things already happen. (To be fair: I can imagine screen use in the classroom on a project basis, where once in a while, you switch on the machine and use it to exemplify, illustrate and make tangible what you previously discussed in class.) There is much more to be said about this topic, but at least for this essay, I leave that to experts in pedagogy.