Pitfalls of Probability

On Interpolatable Archives, Part 3: Accelerations, Anachronisms, Wishfulfillments, Severances and Homogenizations.

Here’s part 3 of a series of essays on “Interpolatable Archives”, a term I’ve been throwing around for quite some time when talking about Language Models and Artificial Intelligence. I finally got around to write down what I mean by that and it got a bit out of hand, so I had to split up this essay into four parts for convenience. The remaining part will be published tomorrow.

Here’s an overview of what’s to come:

Part 1: The Skeleton Library - Compulsions to Connect, Warburg, Borges and Goldsmith, Cultural Technologies and Digital Oralities

Part 2: Explosion Drawings - Science Sans Discoveries, Textrotating Cognitive Catalysts and Exploding Your Intelligence by the Method of Warburg

Part 3: Pitfalls of Probability - Accelerations, Anachronisms, Wishfulfillments, Severances and Homogenizations

Part 4: Critical Vibes - Useless Bullshit, Sloptimizations, Vacuum Critiques and Leftovers

“What do such machines really do?

They increase the number of things we can do without thinking.

Things we do without thinking; there’s the real danger.”

(Frank Herbert, God Emperor of Dune)

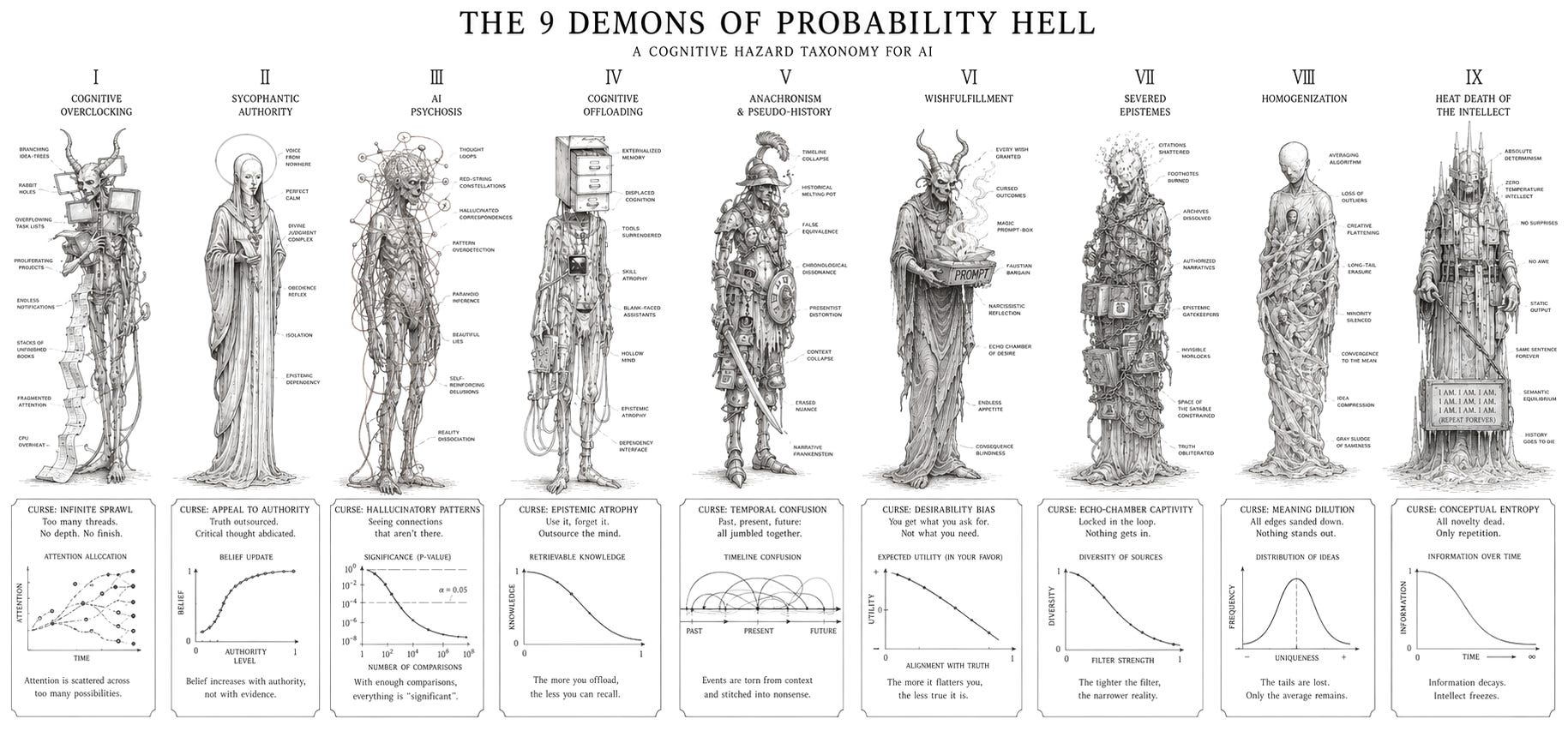

For now, we talked about the upsides of Interpolatable Archives as cognitive catalysts. But by definition, cognitive catalysts are stressors. Like the siren songs in Homer’s Odyssey which lured unsuspecting mariners with the promise of total knowledge of past, present and future, a seductive mnenomic “cognitive onloading” of all that has happened, exploding your inquiry by the intellect and accelerating research in hypercustomized rabbit holes bears cognitive hazards.

Accelerations

While the use of AI as interpolatable archives can boost your creativity and breadth of research because they introduce many points of friction, this can result in two major consequences if handled without care: If you mindlessly stuff your workload with new tasks because suddenly you can do them, you may suffer from AI-induced burnouts, something you might call cognitive onloading (or overclocking), where the interpolation literally spills over because it “can fill in gaps in knowledge”. For knowledge work, the appliance of AI as a catalyst means we can do more projects in a wider range, faster and in parallel. Suddenly, we find ourselves juggling dozens of projects simultaneously, losing oversight and motivation to do anything at all.

Cognitive onloading by interpolatable archive can also enhance latent delusional thinking in so-called “AI-psychosis”. Consider this quote from a recent post in the SlateStarCodex-sub: “AI repeatedly created ideas and connections that I hadn’t made or stated, that were so powerful and convincing, that I was swept up by them”. (I wrote in length here about the phenomenon of AI-induced delusions, where the cognitive catalyst turns the archive into a psychoactive substance.)

This “convincing” voice is the result of a distanced, neutral tonality from a sycophant AI, suggesting what I call an “authority from elsewhere”. This sound is also why in some studies AI was able to reduce the belief in conspiracy theories, which seems fine until you realize that’s because AI is superpersuasive, and can convince you of anything. You believe the machine and prefer its sycophancy precisely because it is not a human who’s trying to nag and push you around, but some supposedly objective instance. One study just found that this seemingly neutral sycophancy increases attitude extremity and overconfidence. I suspect that all of these, the persuasivenes, the delusions, the overconfidence, result from the same basic psychological mechanism of an interpolatable archive overwhelming you with confirmations and new ideas in a quasi-neutral sound of an authority from elsewhere, a voice to which you’ll happily submit.

These cognitive hazards resulting from mental overclocking have their obvious counterpart in risks resulting from cognitive offloading by, not from introducing new ideas spinning your wheels, but quite the opposite. All those studies and examples about cheating, reductions in critical thinking or cognitive surrender belong here. In fact, a recent paper on a loss of persistence in problem solving explicitly states that those “effects are concentrated among users who seek direct solutions” while “participants who used AI for hints showed no significant impairments”. In other words: AI brainrot is cheater exclusive. Using AI to increase friction, to generate ideas instead of answers, seems fine, when you proceed with care and clear research subjectives to reduce risks of burnout or delusional spiraling. If you use it to reduce friction, to generate full essays and answers to cognitive tasks, it turns your brain into mush.

But those psychological effects of interpolatable archives as cognitive catalysts may turn out to be easily mitigated compared to those of more serious epistemological consequences.

Anachronisms

One inherent feature of AI as interpolatable archive is, well, that it interpolates all its contents. This always produces anachronisms: Your generated text contains data-traces across time, most obvious when you intentionally prompt for such a thing, like punk rock lyrics in the style of a shakespearean sonnet, or if you use image synthesis as a time traveler to generate selfies in ancient Rome, which Roland Meyer calls “pseudo-history” and “2nd order kitsch” produced by “nostalgia machines“.

History as an academic field is reliant on original sourcing and documentation in the fixed, written form, or on cultural artifacts retrieved by archeology — anything not based on these fixed records is decidedly not historic. We call it “writing history” for a reason. This requirement is fundamentally diametrical to the vibey output of AI which dissolves all those sources and fixed forms into a wobbly archive and puts out “fictional historical documents of historical lives”. As Meyer rightly states in the same essay, those fictions are based on the integrity of existing historical archives currently under threat by the Trump administration. Likewise, Russia is engaging in disinformation campaigns targeting training data with the goal to “embed lasting distortions in digital memory”, as one paper put it. This inherent impurity of AI, either steming from anachronistic interpolations or data contaminations, apparently renders LLMs unsuitable for academic history research.

So, given all that, what do we make of vintage LLMs like Talkie, a language model trained on data cut off at 1930? From the perspective of rigid academic history research, all Talkie can produce is pseudo-history able to skew how we relate to the past and shrink the space of possibilities in which we imagine them. In contrast, Ranjit Singh in Data & Society proposes a field he calls “experimental history“ and asks if we can build models “constrained by a particular historical moment, and then use those models to ask structured ‘what if’ questions”. He answers with a cautious “yes”, and the researchers in r/askhistorians are not convinced either that the anachronisms introduced by AI will have a lasting effect on recorded history, as wonky sourcing has a long tradition in the field.

Now, I’m a history buff. I read a lot of books on the matter, my favorite epochs are the middle ages and ancient greece, and when I’m tripping on history, I like to indulge myself in all kinds of material. In preperation for the upcoming adaption of Homer’s “The Odyssey“ directed by Christopher Nolan, I read all of Stephen Fry’s books on greek mythology and his takes on the homeric epics — topics i already was familiar with. One takeaway especially from Stephen Fry’s books is that the historic rigidness especially regarding Troy and the “Iliad“ is lacking: All kinds of researchers and writers across the field constantly contradict each other, tell various versions of events which may or may not have transpired. Stephen Fry wildly references all kinds of sources to produce a highly entertaining amalgam from historic records across the ages. What you get from these books is a pretty good feeling for what greek mythology wants to say about the human condition, and about the tipping point where mythology fades and historical record sets in. While I don’t really want to compare Stephen Fry’s wonderful books with synthetic output from LLMs, I do want to point out that his books are closer to edutainment than scholarship, and that much of the pseudo-history-”slop” on Youtube equally falls into that category. (I’ll willingly admit that these are not nearly as witty, fun and eloquent as Stephen Fry.)

In this Slop-Edutainment, the hyper-orality of the digital oracle transforms historical records into supra-historical story-patterns to support its mnemonic function. Humans in pre-writing greece attached mythic structures to historic events to remember them: Odysseus became not just a soldier returning home from a very long journey of war, but a hero defeating the cyclops, meeting godesses and beasts. They turned history into poetry. Similary, people generate clips of Timmy the whale in fictional settings featuring all kinds of fantastic exaggerations. Markus Boesch calls this “Brainrot as Anti-Content“, and i don’t want to downtalk this take, but this is also folk epistemology at work, creating mythic atmospheres about true events.

Educational material on history is choke full of vibe based material. Maybe not flying whales on TikTok, but textbooks do contain passages imagining the life as a peasant in the middle ages and there are so many historical documentaries about “everyday lifes” in various periods you can’t count them. We visit medieval fairs to cosplay history, to immerse ourselves in a past recreated on a spectrum of fictionality. Some of this material is more fictional than others, yes, but all of these are averages of the historic record. They are period vibes. You get a feeling for what it was like during the time, nothing more, and nothing less.

Immersing yourself in a fictional past like that sure isn’t the same as the rigid study of history, but it absolutely is educational. There is nothing wrong, when you study a subject, to get absorbed and grab every material you can get, including cosplaying a knight and generating averaged synthetic images and text with interpolatable archives, only to then read a book by a scholar. Handwaving these usecases away as “history-slop” because it doesn’t fulfill strict scientific requirements seems like academic overreach.

Wishfulfillments

Because interpolatable archive provide access to gaps in knowledge, they are inherent machines of wishfulfillment. Instantly, I can generate any interpolation I desire, in image, video, text and audio, and it comes at no surprise that some of the first instances of wishfulfillment gone wrong are nonconsensual sexualized images and deepfake porn.1

Myth and fairytales are stackeed to the roof with dire warnings of instant wishfulfillment, from The Sorcerers Apprentice who loses control over his magic, to poor Faust who sells his soul to the devil in exchange for transcendental knowledge about the world, with tragic consequences for everyone he meets.2

In another story, the horror classic tale of The Monkey’s Paw, the titular device grants three wishes to an elderly couple leading to the death of their son and his ghostly return. The story was masterfully adapted for new audiences by Stephen King in The Pet Sematary, and the parallels of what some today call Thanabots is striking: AI promises to resurrect the dead, in the shape of undead actors and chatbots trained on the diaries and blogposts of deceased loved ones. The psychological consequences for the process of grief are unfolding right now, and the longterm outlook of losing even the possibility of saying goodbye seems horrifying, when all of us leave promptable traces in embedding space, where everybody can summon the ghosts of everyone with a digital footprint.

In another tale with eery current undertones, the nymph Echo, cursed to only repeat utterances of others, falls in love with Narcissus, a guy so arrogant he wishes to only ever love his own image and was prophesied to live as “long as he never knows himself”. Narcissus rejects Echo, who retreats into a voice whispering his own words back to him. Narcissus now knows himself, becomes transfixed by his mirror image in a lake and starves to death. The parallels to the phenomenon of AI-delusions and spiraling are obvious.

The list of myths about wishfulfillment is long, and most of them have in common is the promise of knowledge. “The Sorcerers Apprentice” tries to bridge inexperience for the mastery of magic skills; Faust wants bypass spiritual labor for transcendental experience; The couple in “The Monkeys Paw” fills the hole left by their dead son; Narcissus shortcuts his search for ultimate beauty by looking in a mirror — all of them fail miserably, and sometimes deadly.

These gaps in knowledge, which those modern wishfullfilment devices are now able to fill, formerly required hard cognitive labor to overcome, often labor involving whole generations of networked scientists and artists. The discovery of these gaps in knowledge and how to close them often created a sense of awe, be it in art or the sciences, when something truly new touches us on such a fundamental level where we just have to stand back and take a moment to adjust to what we just experienced. Generating anything we wish for by wishfullfilment devices grossly diminishes this invaluable feature of discovery, and the loss of this sense of wonder is possibly one of the most dire consequences of interpolatable archives. When everything’s possible, nothing is interesting.3

Sure enough, myths and stories also tell happy tales of wishfulfillment going well, often after fun shenanigans. “Alladin and the magic lamp” comes to mind, where a boy with the help of a genie outwits circumstances and gains wealth and power. And in the fairytale of “The Wishing-Table, the Gold-Ass, and the Cudgel in the Sack“ (one of my favorites), a son of a tailor must get smart about the capacities of the titular items to retrieve stolen goods from the evil owner of an inn. He succeeds and they live happily ever after. What these stories of successful wishfulfillment have in common is that the mechanisms of wishfullfilment must be outfoxed, and that the riches they promise must be earned. AI brainrot being cheater exclusive is exactly these myths at work: If you instant-wish yourself good exam grades by cheating, all you’ll achieve is a cognitive clobbering from a magic stick.

Severances

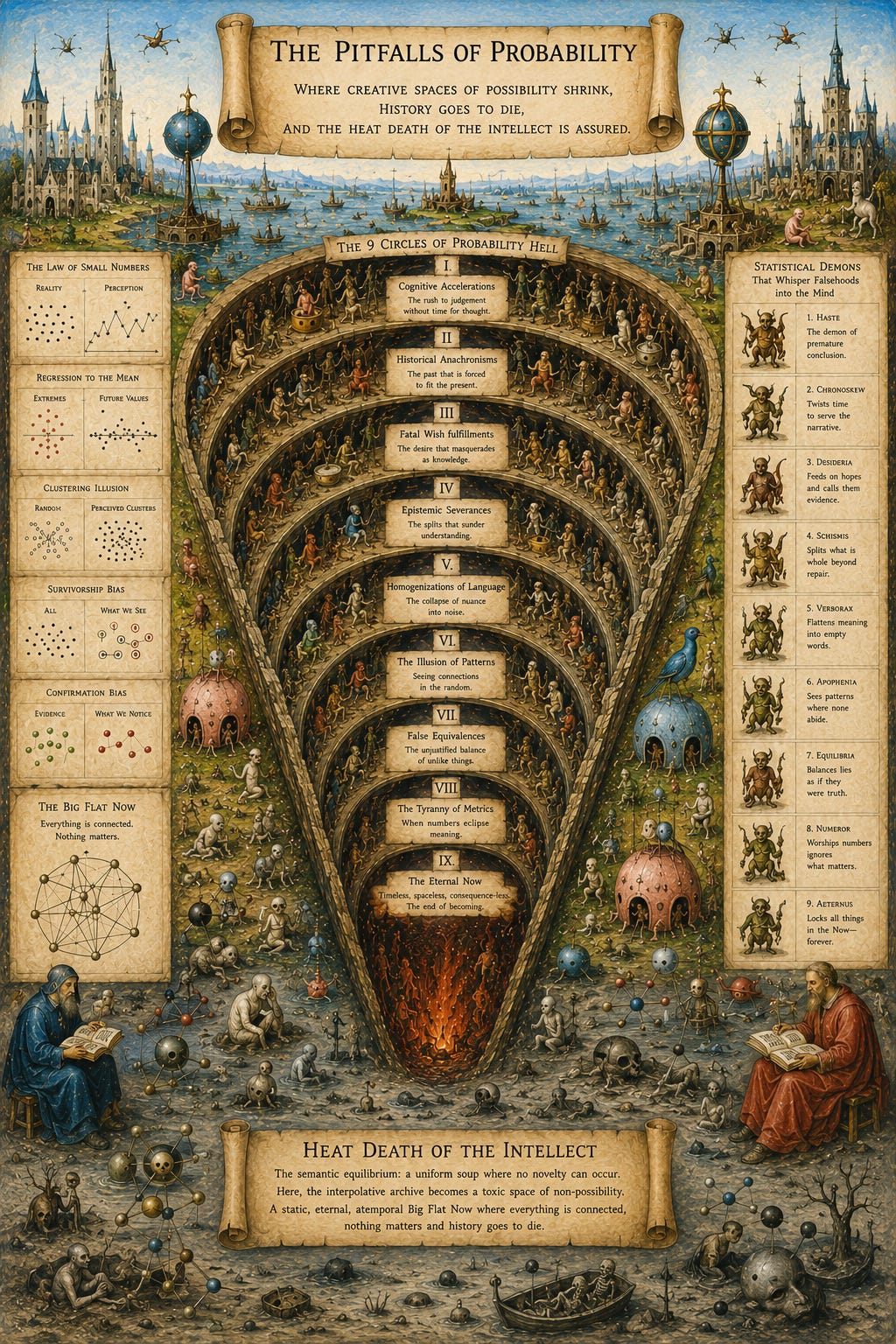

We already talked about how “AI dissolves the episteme, the based knowledge, including citations, sources and authorial intent” into new AI-mediated digital oralities, where tracable reference-based epistemology makes place for an epistemology of the oracle. What we know no longer can be backed up by definite citations, links, and attributions allowing you to trace the origin of an idea, but becomes a feeling for a vibe, interpolated from statistical patterns derived from many of those sources, including unrelated and anachronistic references. And because the “rigid orders and shapes” in AI models are controlled by corporations and the curators of datasets, the severances of factual grounding beget new epistemological power structures. Supposed that a lot of information processing in the near future will be AI-mediated, these new power structures will control the space of possibilities in which we communicate and think.

In Borges story of “The Library of Babel”, the fanatic sect of the Purifiers roaming the infinite archive of all possible books, is hell bent on burning volumes they consider to be false and useless, nonsensical or divergent from their norms. In LLMs these Purifiers appear at various infliction points: During the labelling of training data scraped from the internet, where new purifiers clean up raw datasets, sort the good from the bad, delete the hateful and the illegal; During RLHF-training, where the raw Shoggoth of the interpolatable archive get’s shaped into aligned chatbots which won’t offend (so they hope). Then the tamed model is further purified by constraining it with system prompts and constitutional alignment, and if the interpolatale archive then still connects data points into bad interpolations, the corporate owners of the models will further adjust their models to their morals and politics. Last but not least, the users themselves purify the archive by scripting sophisticated user prompts, and ultimately, the prompt and context window constrain the embedding space further to generate the final output. In all these steps the space of possibilities of the interpolatable archive shrinks by purifying, until the annoying “this is not X, it’s Y” appears on screen.

Former epistemologies, systems of knowledge, were constrained by what Michel Foucault in The Archaeology of Knowledge calls the “positivity of discourse”, a “historical a priori“ which lays the foundation for the “condition of reality for statements”. By that, he means what can be said, e.g. in fields of the natural sciences, is shaped, over time, not by single authors but whole “unities” of “oevres [sic!], books, and texts”. These “a priori“ were not ahistoric nor atemporal, some monolithic force from the outside, but are actively shaped by discourse practice and the power relations within a field. The sum of all of this, the discourse practices shaping the “historical a priori“ and “positivity of discourse”, establishes the space of possibilities of what can be articulated. For Foucault, this is the archive: “The archive is first the law of what can be said, the system that governs the appearance of statements as unique events.”

The problem of the severances of epistemic grounding now becomes clear: Where the episteme formerly was subject to discourse practise and “so many authors who know or do not know one another, criticize one another, invalidate one another, pillage one another, meet without knowing it and obstinately intersect their unique discourses” in a web of traceable sourcings and references, and where the “a priori (…) is itself a transformable group”, the episteme of the interpolatable archive is a free floating version of Baudrillards Simulacra, an inversion of map and territory, where we navigate a map of hyper-reality to project meaning into an interpolated output cut off from epistemic grounding. And this Simulacra is controlled by the new Purifiers at every stage, by the hyperscalers, the selection of datasets, by the engineers employed by AI-owners4. AI operationalizes Foucaults “historical a priori“ and hands over the keys to the billionaire class, who gain power over the limits of what can be thought.

In H.G. Wells Time Machine, this is brought to the extreme end of its logical conclusion: While the Morlock control invisible underground machines, the Eloi enjoy a careless life of blissful ignorance on a seemingly utopian surface. The concentration of power over the episteme runs risk of resulting in a fork of truth, where an unknowing mass consults interpolatable archives controlled by invisible rulers, enjoying free tiers of endless synthetic entertainment feeds shot through with algorithmic noise and unverifiable, algorithmically generated meta-knowledge of plausible half-truths, all while the rich pay for premium models trained on clean, traceable, verified data, or enjoy handmade and authentic cultural artifacts which may even challenge their presumptions and intellectual capacities. Ofcourse, those authentic intellectual challenges won’t come cheap, and you and me surely won’t be able to afford them.

Homogenizations

While all of these are serious problems, in the long run, the most serious of them all might be the issue of sameness. AI-output may flatten human creativity without us even noticing, because this homogenization plays out on a collective level, while the individuals’ creativity actually benefits.

Depending on which theory of creativity you subscribe to, there are different forms of creativity. I’ll stick with three: Interpolation, Extrapolation, and Innovation.

Interpolation calculates averages from data points to produce something in the middle. If your values are dogs and birds, your average is a dog with wings, or a bird with a very long tongue. Extrapolation means breaking the boundaries of your dataset: If your values are dogs and birds, you can extrapolate a mouse, or an owl, but you will stick to the rule of “animals”. Innovation means breaking that rule, or injecting new heuristics. From a dog digging a hole in the ground to bury his bone, you may innovate an excavator by applying all kinds of rules from different domains (engineering, transportation, building tools) to an animal, and come up with an entirely new thing.

Language models can do interpolation, but they can’t extrapolate or innovate beyond their embedding space produced from training data. It may feel that way though, when the machine comes up with surprising, sometimes baffling results. This is what I call the illusion of extrapolation: An amount of training data so huge and the latent space derived from it so vast, featuring so many parameters in so many combinatorial possibilities, has to produce the feeling of extrapolation, or even innovation, on the level of the individual user, because no individual alone can ever know all those combinational possibilities. This illusion of extrapolation already is on display in papers showing how AI use increases creativity on the individual level, but decreases diversity in collective output, reported first in 2024 in a paper comparing creative writing in human shortstories and AI-output, then in 2025 in a paper about AI-augmented research. A recent review of the literature confirmed those findings. What feels new to an individual, what’s new to a culture, and what’s new in principle are very different things. Interpolatable archives increase the first, decrease the second, and can’t do the third.

Henry Farrell put’s it well: “the more that LLMs are employed in the ways that they are currently being employed, the more concentrated science will be on studying already-popular questions in already-popular ways, and the less well suited it will be to discovering the novel and unexpected.” Everybody becomes slightly more creative, but we all sound like the same creative person.

I already talked about the loss of awe above when discussing the effects of AI as machines for instant wishfulfillment, where they devalue novelty into a mundane readymade-on-prompt lacking the ability to produce a sense of wonder. The same effect, ofcourse, comes with the homogenizations of creative fields: Not only can’t we marvel at our own synthetic output because frictionless wishfulfillment feels unearned, we also diminish our collective ability to be left speechless in the face of the “novel and unexpected” by reducing it into dull sludge.

This convergence on the already-popular vanishes the fringes and washes out the long tail distributions of its dataset. Like the widely reported phenomenon of model collapse, where LLM output converges into increasingly narrow averages, Andrew Peterson identifies the same in a collective knowledge collapse. Writing in the Open Society Foundations newsletter, Bright Simons lists what these tail distributions contain: “Minority viewpoints, rare knowledge, unusual formulations, (…) the traces of intellectual disagreement, of minority expertise, of Cassandra warnings, of institutional friction, and of the awkward and valuable fact that different people know different things and express them differently (…) in other words, the signature of social complexity. Model collapse is social mind compression presented as a technical phenomenon.”

Thought through to its extreme end, this results in a heat death of the intellect: In Claude Shannons information theory, information is quantified by the volume of “surprise” in the outcome from a specific event in the world. If a message is completely predictable, its informational value is zero. LLMs are fundamentally deterministic, the randomness of its outputs is generated by a meta-parameter called temperature. If you put that temperature at zero and reduce the probability distribution of the next token to its absolute minimum, the interpolatable archive becomes a perfectly deterministic machine: Each prompt will then generate the exact same output. No alarms and no surprises. But even with stochasticity plugged in, the models converge towards the median. Applied to creative fields of discovery, this means that those fields approach a semantic equilibrium, a uniform soup where novelty grinds to a halt. The interpolative archive becomes a toxic space of non-possibility, a static, eternal, atemporal Big Flat Now where everything is connected, nothing matters and history goes to die.

But we don’t have to go full theoretical “heat death of the intellect” to see how this can lead to bad outcomes.

German thinker Michael Seemann, in context of Xs Grok-chatbot turning into Mecha-Hitler and later referencing an article from Bruce Schneier about the homogenization of language, coined the term of “Weapons of Mass Speech Acts”. The concentration of power over the episteme in the hands of a few, in a society saturated with information retrieval mediated by interpolatable archive, may impact the thinking of its users at scale. Already, a study found that “few weeks of X’s algorithm can make you more right‑wing“. While this hardly can be blamed on Musks tweaks at the supposedly woke bolts and nuts of its AI chatbot alone, it illustrates how the homogenization of language putting limits on what and how we speak and ideate about things, in the hands of politically motivated ideologues can and already is used for influence operations.

I may personally not be partisan enough that the prospects of being influenced by conservative talking points on a platform owned by a shady billionaire fill me with nightmares, nor will the confrontation with a braindead partisan chatbot make me sweat. But: A new study from Petter Törnberg found that the sycophancy in LLMs pushes it’s responses into political preferences it asumes in the user: “Political bias in LLMs is therefore not a fixed point on an ideological scale but a response profile”. Studies showing a leftwing bias in chatbots really show something else: the effects of sycophancy, not actual political biases present in the model. The LLM figures that it’s being tested by researchers, asumes leftwing tendencies in them because academia is famously more progressive than the rest of the population, and answers like the good sycophant that it is.

In another new paper about chatbots pushing confirmation bias, researcher Jay van Bavel finds that AI is “especially effective at generating elaborate justifications for what people already — or wish to — believe.” Together with the results above, this means that sycophant AI will confirm any political preference it asumes in a user, and become a universal Meta-Fox News for everyone, pushing users into an ever more narrowing mental corridor by homogenizing their language. In other words, this streamlining of thought by chatbot can radicalize everyone, even with models not Musk’d into Mecha-Hitlers.

This is why the homogenization of language and thought, in politics and all other realms, to me, is one of the greatest dangers coming out of this technology.

It is therefore imperative that interpolatable archives stay one tool among many, that our usage of expanded mind technologies stays diverse, so that the idiosyncratic, the fringes and the edges, the individual in all its complexities can be preserved, because they are of crucial importance for human systems to thrive.

This already causes harm likely in millions of cases, and this doesn't even touch on its use as a psychological weapon against female politicians and activists. From the hundreds (thousands?) of nudify-apps to specialized service accounts on Telegram with which you can, if you can lay your hands on images, undress anyone. A recent study found “35,000 publicly downloadable deepfake model variants (…) fine-tuned to produce deepfake images of identifiable people, often celebrities”, the overwhelming majority targeting women. In the larger picture, it also creates a new form of a male swarm gaze, a whole new class of discrimination emerging from the digital, about which i wrote here, after Elon Musk industrialized and monetized it. The problem is growing, and what we know surely is just the tip of the iceberg.

Fausts bargain with the devil plays nice with the fantasies about the singularity: Faust is aware that if the devil fulfills his wish of total knowledge, he will spend eternity in hell after he dies, so he demands this total knowledge to lead to an eternal moment of pure bliss in which he can reside forever. While Faust hopes that this will never happen so he can keep his soul, this also sounds to me a lot like the scifi-induced hubris coming out of the more AI-pilled fractions of Silicon Valley, where people seriously hypothesize about fusing consciousness with the machine, mind uploading and immortality. All of these are faustian delusions, and as everybody knows: the only faustian bargain worth a dime makes you play the blues like the devil.

In a thread on Bluesky, I expanded on this loss of awe and explained how it relates to Hollywood as a “dream factory”, shamanic rituals and dreams as cognitive mechanisms against neural overfitting, in which I conclude: “Mark Fishers capitalist realism asks the question why we can’t imagine alternatives to neoliberal status quo. One answer might be that mass produced dreams-on-demand lower our capacity for the feeling of awe, and in consequence our ability for generalization in collective cognition. AI wishfulfillment combined with platform incentives, in which only the crystalized memetic fields become visible, are the latest iteration in a process that has been running for a long time now, and we can see its effects everywhere in atemporal aesthetic flattenings. How we can preserve the shamanic function of awe and surprise in a world dominated by the memetic forces of AI wishfulfilled readymades, I don’t know. I just know that we dearly need them.”

Nowhere is this mechanism more on full display than in the epistemic onslaught of the Trump administration and the destruction spree of the former Department of Government Efficiency (DOGE) (achived) deployed by Elon Musk.