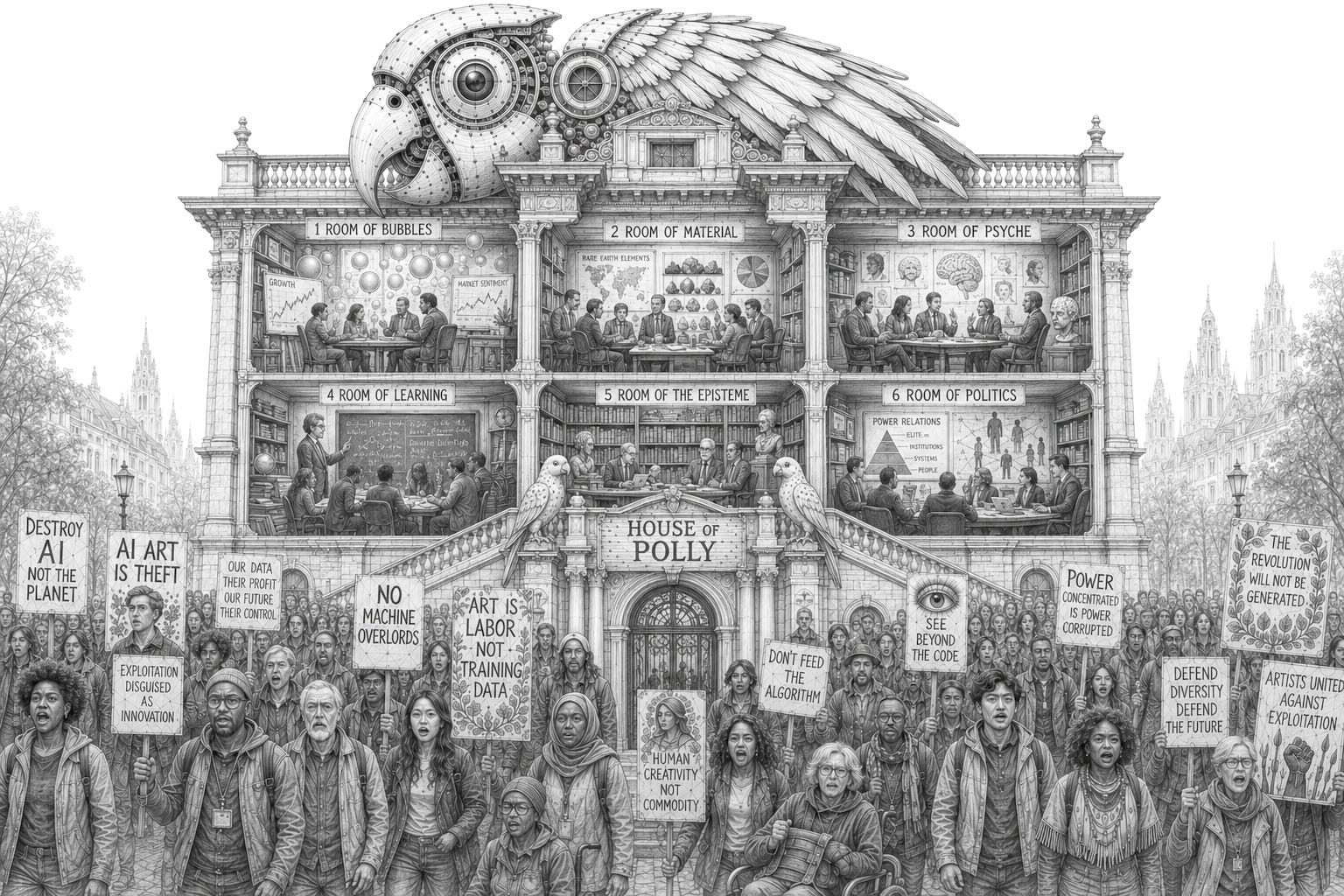

The House of Polly

On Interpolatable Archives, Part 4: Useless Bullshit, Meaning Of The Poetic Kind, Sloptimizations, Thinking In Vacuums, Remainder Criticisms and Games of Chess

This is the 4th and final part of my series of essays on “Interpolatable Archives”, a term I’ve been throwing around for quite some time when talking about Language Models and Artificial Intelligence.

Part 1: The Skeleton Library - Compulsions to Connect, Warburg, Borges and Goldsmith, Cultural Technologies and Digital Oralities

Part 2: Explosion Drawings - Science Sans Discoveries, Textrotating Cognitive Catalysts and Exploding Your Intelligence by the Method of Warburg

Part 3: Pitfalls of Probability - Accelerations, Anachronisms, Wishfulfillments, Severances and Homogenizations

Part 4: The House of Polly - Useless Bullshit, Meaning Of The Poetic Kind, Sloptimizations, Thinking In Vacuums, Remainder Criticisms and Games of Chess

“These are the Talking Rings?”

“Yes.”

“They speak, hm? Of what?”

“Things no one here understands.”

“Make them talk.”

(The Time Machine, 1960)

What I tried to achieve in this series of essays is to look at the chances and risks of AI as a cultural technology and see what remains once you strip it of cognitive woo and singularity myths: a technology that provides access to a dissolved, interpolative archive through textual interfaces. Such archives, pushed to their extreme ends, result in a homogenization of language and an ever-narrowing space of possibilities, what I called the “heat death of the intellect”.

All of these aforementioned points of critique are valid, and I didn’t even touch on problems not directly following from the interpolative nature of AI, like all the issues with labor, the environmental impact of energy and water consumption, or economic bubbles which may or may not exist.

Interpolatable archives as they are rolled out now, based on scraped data from the web and cleaned up by exploited gig workers all over the world, are a far cry from their theoretical possibilities. They contain all the discriminations and biases present in human conversations, and generate what is always already reproduced, erasing the marginal in favor of the statistical norm. The corporations running the algorithms extract and monetize the commons, while the problem of copyright in an interpolatable information space might very well be unsolvable: Who do you want to pay, when every output contains the activations of hundreds or thousands of artificial neurons, each of which points to patterns of tokens within sentences within publications that deserve to be compensated? It will take decades for us to figure out fair procedures to handle this.

There are, however, prominent criticisms which fly out of the window once you get rid of the illusion of AI as cognitive agents and view them as cultural technologies.

Enter the House of Polly

Useless Bullshit

Interpolations across billions of parameters will always produce fuzziness, and synthetic artifacts are never not interpolated. But the “blurry JPGs of the web” become sharper by the day, and even blurry JPGs are identifiable. The JPGs work, especially when you can layer, blend and bend them on top of and into each other, and it is sheer foolishness to insist they are “useless” when, every day, users have the very different experience of a very useful piece of software.

I highly recommend reading the essay by cosmologist Natalie B. Hogg titled “Find the stable and pull out the bolt”, in which she describes her journey from rejectionist critic to reluctantly embracing the possibilities and finally becoming cautiously optimistic about the tool. Seasoned coders report productivity gains of up to 100 times their former output, and though I understand and somewhat subscribe to the critique as articulated by Cory Doctorow, who wrote about how “Code is a liability (not an asset)”, even he understands that those increases in productivity in coding can’t be handwaved away.

Moreover, the critique of reliability seems overstated, and the boring gotchas in critical AI discourse may soon become a relic of the past, just like the old office jokes where we machine-translated text back and forth with early versions of Google Translate until it became gibberish, to our delight.

Recent research conducted by the New York Times found that the results of Google’s AI Overview show a 90% success rate, and many pointed out that this still means millions and millions of cases of false information spread by the market leader in search. This is not wrong, but when we compare a 90% success rate in information retrieval with that of a classic search engine, we have to ask: how many of its search results are useful and accurate for the specific query? In my estimation, that success rate is far below 90%, even for un-enshittified platforms.

A prominent trick of internet search is to skip the first page of results because they are useless to your query. Compared to this, results from AI Overview with a 90% success rate seem like no small improvement. The problem then is not accuracy, but the neutral tonality of the “authority from elsewhere” in which the remaining 10% of false information is delivered. Either way, this hardly renders the product “useless”.

Emily Bender’s position that a supposed “parrotness” of synthetic text shows its inherent meaninglessness seems equally outdated. Just a few days ago she defended her positions, and I find myself agreeing with surprisingly many of her arguments, except for one crucial point, arguably her central claim.

She writes that

language models don’t understand text they are used to process, because language models only ever have access to the linguistic form (i.e. spellings of words) in the training data. (...)

we (define understanding) as mapping from language to something outside of language, and show that systems built only with linguistic form have no purchase with which to encode (“learn”) such a mapping.

But latent spaces have such purchase, as they pick up meaning from the patterns encoded in linguistic practice, which, crucially, goes beyond spelling, grammar and syntax. You may call this form of algorithmic understanding of meaning limited, compared to the human understanding of the world, which is built from a dataset much richer and more diverse than the digital mimicry. But it is not the case that there is no understanding at all. Bender, a bit hesitantly, concedes that point, writing about a “thin kind of technical ‘understanding’” which might be present in the models. This seems to contradict the original paper by her and Timnit Gebru, where they write that “Text generated by an LM is not grounded in (...) any model of the world, or any model of the reader’s state of mind” (emphasis mine). But even when those synthetic models of the world are low-resolution “blurry JPGs” compared to ours, they do exist.

“Polly wants a better argument”, as a recent critique of the parrot argument states. While this text argues that LLMs can encode meaning because they are trained multimodally and that human feedback loops ground models in extralinguistic reality, the convergence of representations across models deals another blow to the parrot metaphor: In the first part of this essay, when talking about Borges, I made the claim that the “only thing relevant for the LLM is not truth, but the narrative consistency of its vector.” What I left out is that this vector is informed and shaped not just by a prompt, but also by the millions of attractor basins encoding the “external forms of a myriad traditions” we talked about earlier.

Meaning of the Poetic Kind

In the introduction headlined “AI as culture”, in his book “Language Machines”, Leif Weatherby writes about how

the implementation of contemporary language generators matches the theory of language that European structuralism advanced nearly a century ago, suggesting that language is complex, cultural, and even poetic first, and referential, functional, and cognitive only later. This poetic language is not only computationally tractable but turns out to be the semiotic hinge on which an emergent AI culture depends.

These poetics (the structure and principles of poetry) picked up by the models are precisely Henry Farrell’s “tales that are sung”, where “LLMs are not the singer (...) but the structural relations of the tales that are sung (…) we can now listen to and even interrogate (…) without immediate human intermediation.”

The machine lacks the subjective intent of a cognitive agent grounded in social reality, but it does encode meaning and valid semantic representations of the world in the shape of poetic representations, the structural vibes, picked up from the human practice of the linguistic form. Or, as Allison Parrish put it back in 2021: “a language model can (...) write poetry, but only a person can write a poem.”

This is a fatal blow to the bullshit argument. As per Harry Frankfurt, bullshit is the use of language with “a lack of connection to concern with truth” and an “indifference to how things really are”. But the models do show a differentiation between how things really are and how they are not, which you might as well interpret as a “concern with truth”, as such concern is present in the poetics of the language they are trained on. Insisting that such output is meaningless bullshit from a metaphorical parrot then requires a cognitive agent that isn’t there. LLMs can’t be “bullshitters” (the term itself is an anthropomorphization) because bullshitters require agency to bullshit. The bullshitter is always the user, never the interpolatable archive she uses to bullshit.

Adding insult to injury is the very probable outlook that the severances of epistemological rooting we talked about may well turn out to be solved as soon as interpretability improves and the black box myth finally disintegrates into hot air. As Shalizi put it at the first conference for cultural AI¹: “GenAI is information retrieval and synthesis. With the right tools + access, we can quantify the influence of each training document on every response”.

Those parrot-metaphors and bullshit-claims are arguments aimed at misguided comparisons to human cognition and the resulting hype and marketing lingo, and as such, I can relate to them. But as an argument against meaning encoded in latent space or the capacities of language models, the value of the parrot/bullshit-arguments is nil.

Sloptimizations

Accordingly, and to be frank, I find the critique of “slop” to be banal. The world has been full of standardized and optimized language ever since we began talking to each other and mimicking successful speaking patterns, and ever since the ancient Greeks invented schools of rhetoric for politicians to convince and persuade (or to deceive and bullshit) their publics.

George Orwell already complained in his famous essay on “Politics and the English Language” that “(a)s soon as certain topics are raised, the concrete melts into the abstract and no one seems able to think of turns of speech that are not hackneyed: prose consists less and less of words chosen for the sake of their meaning, and more and more of phrases tacked together like the sections of a prefabricated hen-house.” This is as much of an assessment of “slop” as anything you can find on Bluesky these days, and I find the standard critique of sloptimized language to be quite sloppy itself.

People sure like to romanticize originality, when the history of prose is filled to the roof with ripoffs, amalgams and chimeras, from the “Divine Comedy”, which happily mashed together Roman and Greek mythology with Christianity and interpolated the existing cultural archive of its time to send Dante’s personal enemies to hell, to all the examples J.W. McCormack uses to illustrate his point in the delightful piece on Neverending Stories about LLMs, copyright and originality, in which he states that “Writing was destined for automation, from the punch cards of Charles Babbage and Ada Lovelace to Turing machines and, hell, Choose Your Own Adventure—but an AI can’t ‘know’ what makes a good story any more than CAPTCHA knows what does and does not make a motorcycle. What it can do is meet our expectations based on pattern recognition.”

If the market for young adult fantasy romance novels hyped up on BookTok is any indication, those expectations are easily met, and I consider such pulp machine-generated slop regardless of its origin in a human mind. I refuse the “false choice between refried ectoplasm and a serial aesthetic in which mass media has stabilized redundancy”, as McCormack puts it in his piece. I might be a rejectionist after all! I make no distinction between sloptimized output from the organizational artificial intelligence that is a corporation and sloptimized output from artificial models of language. To be honest, I often consider human slop a much worse case than the synthetic kind, precisely because it does contain the deceiving intent a machine lacks.

I can’t remember such public hostility toward the synthetic, polished, median texts of human origin coming out of public relations, advertising agencies or politics, and surely you’ll find more sinister examples in those places on which to feed one’s anger than getting worked up about “shrimp jesus”. Get real.

Thinking in Vacuums

But even the more serious points of critique we discussed (the accelerations, anachronisms, wishfulfillments, severances and homogenizations) presume usage of AI in a vacuum for them to unfold their toxic potential in full. Sure enough, if, and only if, interpolatable archives become the primary way of retrieving information, sharing knowledge and shaping public discourse, then epistemic grounding is severed, we risk spiraling into bespoke mirror worlds, science stops being science, history turns into pseudo-history and LLMs turn into weapons of mass speech acts. In reality, however, this never happens.

For me, I use books physical and digital, I read articles, papers and essays in print and on screen, follow the news and read at least somewhat across the political spectrum, I listen to podcasts, talk to experts and non-experts of all kinds, and sometimes I use latent spaces to explode my ideas and explore them by interpolatable archive. Occasionally, I touch grass. None of these alone shape my ideas — it’s always all of them. This is true for most of us, and while this may not hold for your hardcore MAGA-pilled uncle, a study found that “dialogues with AI reduce beliefs in misinformation”. So there’s that.

Returning to the subject of AI-generated pseudo-history, for instance, then yes, we might tune in to AI videos illustrating everyday life in ancient Rome on YouTube, and indulge ourselves in an hour of averaged synthetic imagery from a seemingly distant past that is an anachronistic interpolation of data points from across time: moving pixels generated from stock video, illustrations and memes. But if I’m interested in such clips, I’m likely to watch documentaries featuring real historians as well, listen to podcasts and read books about Pompeii, and maybe recreate medieval food as a hobby. Hell, I might even pay a visit to a museum. Given that new media never fully replaces but always complements what came before, I’d suggest that our understanding of history and our place within it will survive interpolatable archives just fine.

Further, in a recent experiment with “the use of LLM hallucinations to ‘fill-in-the-gap’ for omissions in archives due to social and political inequality”, the researchers “validate(d) LLMs’ foundational narrative understanding capabilities to perform critical confabulation” and found that “controlled and well-specified hallucinations can support LLM applications for knowledge production without collapsing speculation into a lack of historical accuracy and fidelity”. This is in line with projects like Historica, which aims at filling “historical silences” by interpolative archive and at making visible the “absence of records” which are “the result of systemic exclusion, where certain voices are ignored or erased to maintain power”. The use of vintage LLMs to make visible the “historical silences”, especially when combined with an ensemble of diverse material, seems a viable and rich way to educate yourself and others about history. The risks of anachronisms present in language models, again, have more to do with their use in a vacuum than with the anachronisms themselves.

What is needed are norms informed by AI literacy that declare interpolatable archives one tool among many, a device for general research to get a general feeling for the general vibe of the subject or historic period at hand. They can’t replace sources and books grounded in verified facts, but they can complement them, giving you hints at answers, not answers themselves. They should be digital assistants supporting your inquiry, not slaves fulfilling your every wish.

But those are questions of interface design and cultural practice, and the current failings of design choices are not first principles on which you can base an absolute rejection. Sometimes I can’t shake the feeling that thinking in vacuums kills critical thinking much more than AI.

Remainder Criticisms

Viewing AI systems as interpolatable archives which are one tool among many then leaves, besides their material implementation, not much on the table to criticize thoroughly, it seems, and I confess that I’m getting cautiously optimistic about the technology. But I won’t deny the inherent dangers of their usage in bureaucratic decision processes, where we want decisions to be made on a case-by-case basis with human judgement, not broad judgements by algorithm. And we surely don’t want vague decision making based on vibes in targeting systems in warfare, as has already been deployed by the Israel Defense Forces in Gaza.

Productivity gains for now are debatable, as are the effects of AI on the job market. As it’s currently realized, AI is an extractive technology exploiting intellectual labor of the commons for the gains of corporations, largely without compensation. I also won’t deny the open questions of its energy and water consumption. I’m not convinced by either side of the argument, and the amount of conflicting signal coming out of that discourse forbids clear positioning. Yes, the corporations training frontier models are constantly lying about and greenwashing their energy consumption, not to speak of the Jevons paradox of rising demand nuking gains in efficiency.

On the other hand, the operation of AI systems is getting more energy efficient, and developments in local LLMs point towards a future beyond monolithic hyperscalers, where you may even train your own 100 billion parameter models on a single GPU. These developments make me believe that the current state of AI is comparable to that of the pre-PC mainframe age of computers, where huge warehouses full of hardware were necessary to run the same compute which you, today, carry around in your pocket. For comparison, you’d need roughly half a million IBM System/360 Model 75s to equal the processing power of your iPhone, and 35 million of those room-sized mainframes to match its neural engine GPU. Five of those were used to run the whole NASA Apollo program. Given that you can shrink the information processing power necessary to land you on the moon by a factor of several million and put it in everyone’s pocket for you to scroll through brainrotting video clips on TikTok, we probably will see similar effects for putting local artificial intelligence on your laptop.

Yet, the fact remains that AI’s energy and water usage in a world facing climate change is a critical issue at a critical point in time where carbon budgets are running out. This year, a Super El Niño is shaping up, and in mid-April, I’m already tanned like in mid-June. It’s getting hot.

So, if these points —labor, energy and bureaucracy— remain once you view AI systems not as magic cognitive entities but the cultural technology of interpolatable archives, one has to ask themselves if they are worth it.

Even if we can mitigate the averaging effects on creativity by not using them in a vacuum, even if we can work around epistemic severance with RAG and interpretability, find safety guardrails against delusional spiraling and turn those cognitive catalysts into combustion engines for knowledge, the open questions of what they will do to the inherent dignity of labor, to the democratic process and the environment demand answers and solutions I can’t provide. For now, I have to live with the ambivalence.

But what I can do is to place interpolatable archives in a lineage of the cultural evolution of media innovations: With the development of language we introduced the new regime of social externalization to information processing; writing and the alphabet introduced permanence; the printing press in China and Gutenberg’s movable type introduced one-to-many distribution and the edit respectively; TV and radio introduced realtime broadcasting, while the internet confronted us with the regime of the network. AI now is introducing another new regime of information processing: the automatic interpolation of data.

From this perspective, AI is a normal cultural evolutionary step in information distribution and processing, and like every normal cultural evolutionary step in information processing, it will change everything. None of the transitions mentioned went over smoothly, and neither will this one.

We’ve successfully integrated cognitively disruptive innovations in the past, and we will do so today. The disruptions of interpolatable archives run deep, both on individual and societal levels. But, and I hesitate to make this point, I’d also argue that AI in the shape of interpolatable archives, as the latest step within the lineage of cultural evolution, is, indeed, inevitable. Each of those steps within that lineage was inevitable from the perspective of cultural evolution, as is the interpolation of big data by algorithm on inquiry: If we get the affordances (the data, the compute, the algorithms), then evolution will do what it does and squishy latent spaces will appear.

From this viewpoint, shortsighted rejectionist critiques of AI as “the tool of the oppressors” or a “fascist artifact” read to me like a rejection of the invention of cuneiform writing in Mesopotamia because Hammurabi used it to manage agriculture and land ownership to oppress the people. Accordingly, the rejectionism coming out of the majority of critical AI discourse seems to be mainly aimed at corporatism, not the technology itself. I will admit that those critiques do have momentary merit, but I also think they do not justify an absolute dismissal of AI technologies as it is on display in the discourse, because what is not inevitable are the organizational principles of their implementation. Neither the extractive practice nor OpenAI nor xAI nor Palantir are inevitable. They are companies and subject to regulation in a democracy, full stop. Nothing prevents us from running those new archives like national libraries, for instance. But imagining such a political project is not possible from a rejectionist stance.

How about a nice Game of Chess?

Coming back to the question posed earlier of what science is here for, if not discovery: The scientific method is an algorithm. A researcher posits a hypothesis, tests it against reality, and it holds or not. If it holds, it becomes a valid theory, and if not, it’s falsified and kicks the bucket, serving as inspirational fodder in cultural memory for generations of scientists to come. This way, we get ever more detailed descriptions of the world in various languages, often in the language of mathematical formulas, or the playful use of words in philosophy and sociology. A lot of yet unrealized descriptions encoded in the various languages of science now lie dormant in latent spaces, waiting for their Liam Price to discover the prompt. In the simplest terms, this means that the algorithm of the scientific method has been updated.

Now, a scientist may posit a problem and throw it against latent space to see if there is a seemingly valid hypothesis. She then tests that hypothesis against reality, and it holds or not. With AI, scientists don’t construct a hypothesis against an open problem; they identify such problems and consult synthesis as a service. This update may devalue discovery itself and shrink the creative space of possibilities, as the price we pay for speeding up and automating the closing of knowledge gaps.

So, what is science here for, if not discovery? To expand our curiosity and explore. Like for the problem of homogenization, it is therefore vital that science is no longer conducted in the vacuums of fields. What automatic discovery demands is interdisciplinary research: Crossing the streams to make formerly unconnected fields pollinate each other and push the space of scientific inquiry beyond existing training data, to break the boundaries of an interpolatable space and make extrapolation and innovation possible.

Revisiting the defeat of Lee Sedol by AlphaGo and his subsequent departure from the sport, and the rise of collective creativity in human Go players in its aftermath, one of my favorite examples of human antifragility in the face of superhuman machines is the game of Chess. For 30 years now we’ve had computers beating the best human players in the game, and the result has been neither the devaluation of the game, nor human retreat. The result is human-machine cooperative training techniques leading to massively improved skills.

Carlsen uses the chess engine Stockfish to analyze his moves. Speaking about how Google’s AlphaZero “destroying” Stockfish in 2017 affected his game, he said: “I have become a very different player in terms of style than I was a bit earlier, and it has been a great ride.” Today, “Magnus Carlsen dominates the computer era by deliberately playing sub-optimal moves to drag his opponents into the unknown”, because AI introduced Carlsen to “wild ideas”:

According to his coach, the change came from wild ideas AlphaZero uncovered: sacrificing pieces for long-term advantage, pushing the rook pawn aggressively, using the king as an active fighter. Things human experts thought were unsound, but the engine showed they can work.

Every chess engine these days beats any human player with ease. Yet we keep improving and playing for the sake of the game. I’d suggest that similar “wild ideas” will emerge for the use of interpolatable archives in education, science and the arts.

The one thing that made humanity an evolutionary success is our ability for social and cultural learning: to share intentions and iterate knowledge across generations. All progress relies on this. So, even if all the criticisms apply, having a technology which automates the interpolation of knowledge is a promethean gift not easily brushed off. Interpolative archives are not just a mere “scam”, and “Destroy AI” may be a fun shirt, but we shouldn’t destroy interpolatable archives wholesale. I get bad vibes from people trying to burn archives.

The task then is not to reject, but to apply democratic process to regulate, demand fair shares and be careful to preserve our collective ability to innovate: to become neither machine-controlling, exploitative Morlocks nor ignorant Eloi feeding on epistemic plastic, but to find new norms in the acquisition and sharing of knowledge. To stay curious in the face of these new archives, and make them talk.

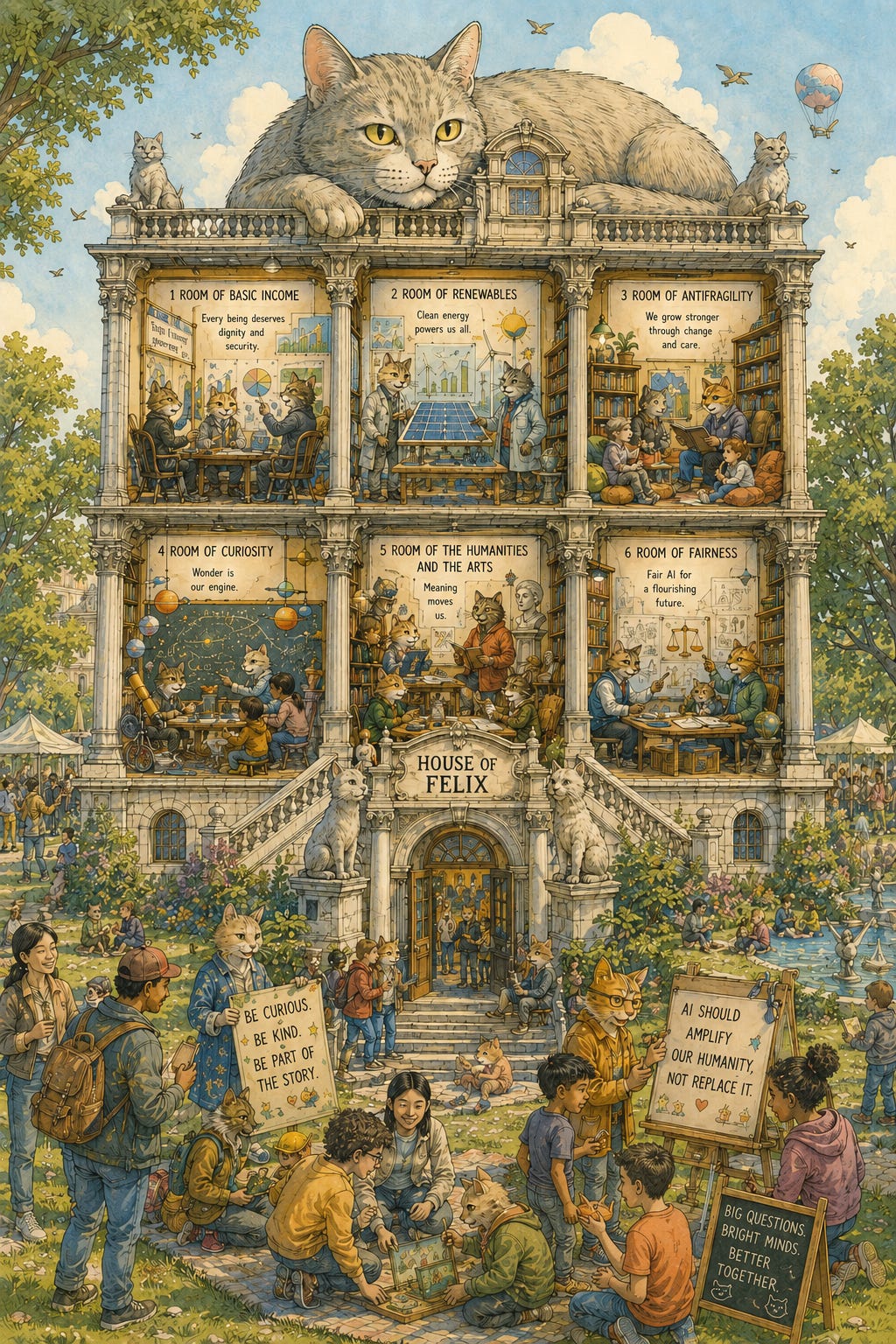

So, as a final peek into the possibility space of interpolatable archives, I wanted to see what the space of an AI discourse might look like if it found a new equilibrium beyond rejectionism and hype, that is curious about the cultural impact of the technology and perceives interpolatable archives not as a threat, but as an opportunity for play.

Enter the House of Felix.²

All talks from that conference on “Cultural AI: An Emerging Field” are on YouTube in two parts: Part 1 featuring talks from Leif Weatherby, Tyler Shoemaker, Henry Farrell, Benjamin Recht, Lily Chumley and Fabian Offert, Part 2 featuring talks from Cosma Shalizi, Mel Andrews, Wouter Haverals, Ted Underwood, Nina Beguš and Danya Glabau. I quoted some of these guys extensively throughout this essay.

In the same vein, the initiative Doing AI Differently “challenges traditional approaches to AI development by positioning humanities perspectives as integral, rather than supplemental, to technical innovation.” They are doing a workshop in summer 2026 to find “A positive vision for culture in AI”. The Cultural AI discourse is gearing up.

I'm getting the feeling that this is a good synthesis of Critical AI and research to make sense of the possibilities without losing the perspective on bad outcomes. Compared to a critical discourse stuck in repeating talking points and a fundamentalist (and boring) rejectionism, this seems like a breath of fresh air, and a viable way forward.

A good starting point to get into this space, besides the talks linked above, is this podcast with Leif Weatherby about How Is AI Affecting Culture?, and I made a Bluesky starter pack (work in progress) if you're into that sort of thing.

I know. I’m aware that this is a quite utopian 2nd order kitschy leap as a synthesis from the idea explosions through cognitive catalysts and their possible apocalyptic outcomes in the previous parts. I also know that curiosity killed the cat, and that, really, cats are not very curious.

But come on… Don’t be a ▭.
