On Interpolatable Archives (Clean)

AI Latent Spaces as Shapeshifting Skeleton Libraries and Explosion Drawings bearing cognitive hazards and new opportunities to play.

I’ve been throwing around the term “Interpolatable Archives” for quite some time when talking about Language Models and Artificial Intelligence, and I finally got around to writing down what I mean by it. It got a bit out of hand, and I had to split the essay into four parts, all of which I collected into this post so I can link to it during discussions.

This is the clean version in which I got rid of the “Slop” and some of the more silly parts of the essay.

If you’d like to read it in shorter chunks, here are the individual parts as they were published:

Part 1: The Skeleton Library - Compulsions to Connect, Warburg, Borges and Goldsmith, Cultural Technologies and Digital Oralities

Part 2: Explosion Drawings - Science Sans Discoveries, Textrotating Cognitive Catalysts and Exploding Your Intelligence by the Method of Warburg

Part 3: Pitfalls of Probability - Accelerations, Anachronisms, Wish Fulfillments, Severances and Homogenizations

Part 4: The House of Polly - Useless Bullshit, Meaning Of The Poetic Kind, Sloptimizations, Thinking In Vacuums, Remainder Criticisms and Games of Chess

I. The Skeleton Library

“I am unpacking my library. Yes, I am.

The books are not yet on the shelves,

not yet touched by the mild boredom of order.”

(Walter Benjamin)

Compulsions to Connect

One hundred years ago, a German scholar named Aby Warburg went mad over what he called his “Verknüpfungszwang”, a compulsion to connect. He was searching for instances of what he called “Pathosformel”: aesthetic commonalities in the expression of human emotional states (joy and rage, grief or ecstasy) throughout cultural history.

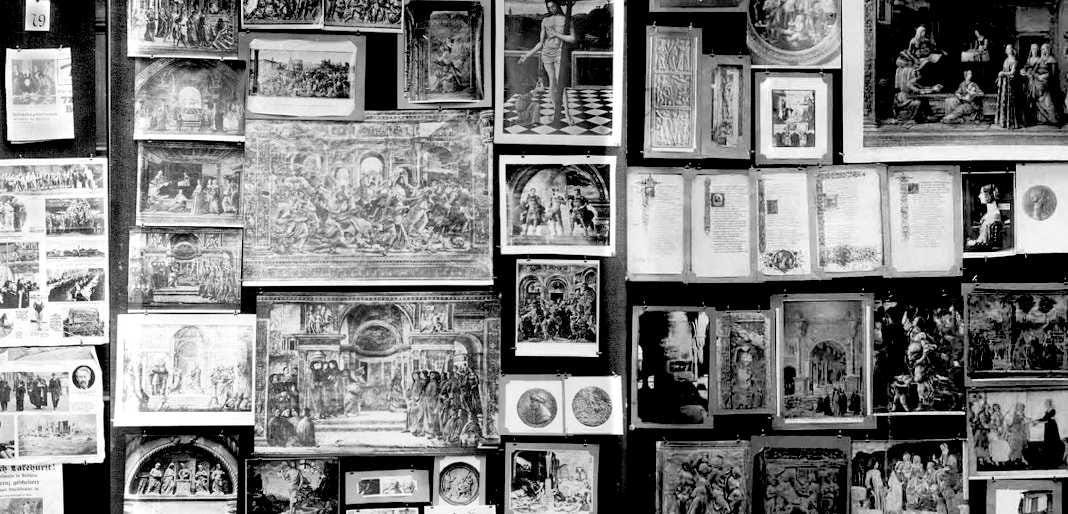

To achieve his goal, he built the original Warburg Institute¹ in Hamburg, where he collected art, books, news clippings and artifacts. For his magnum opus, the Mnemosyne Atlas (“Bilderatlas Mnemosyne” in German), he displayed a collection of 971 artifacts on 63 large panels, each two meters high, and indexed them not by the usual metadata like genre, author, date, or topic, but by idiosyncratic aesthetic categories and psychological, affective intensity. Here are some of the labels by which he sorted this collection: “Different degrees in the application of the cosmic system to mankind”, “Orientalizing of antique images”, “Development from Greek cosmology to Arab practice”, “Rimini pneumatic conception of the spheres as opposed to the fetishistic conception” or “Cosmology in Dürer”.

With the Mnemosyne Atlas, an associative image-based map of meaning, Warburg aimed at what he called an “iconology of intervals”, in which meaning does not emerge from an image’s historical context, but from the space in between associated yet otherwise unrelated, anachronistic images. His associative Bilderatlas can be read as an early prototype of the latent space of an image model whose output was the “Pathosformel”: averaged primitives of affect expressed across cultural history. 100 years later, this kind of navigation of an idea space would be theorized anew in the context of machine learning by Peli Grietzer in his Theory of Vibes; vibes, to him, are cognitive maps that allow us to interpret experiences through the lossy compression of holistic patterns.

Aby Warburg’s Mnemosyne Atlas remained unfinished: he died of a heart attack in 1929. Today, his archive resides in the Warburg Institute in London.

Twelve years after Warburg’s death, Jorge Luis Borges published a collection of short stories called “The Garden of Forking Paths”. It contains at least two stories of interest to our cause, at least one of which you have surely heard of. In “The Library of Babel”, the library consists of books of 410 pages containing all possible combinations of 22 letters² plus period, comma, and space. That fictional library includes the random and nonsensical as well as the meaningful; it holds “the detailed history of the future, the autobiographies of the archangels, the faithful catalogue of the Library, thousands and thousands of false catalogues, the proof of the falsity of those false catalogues, the proof of the falsity of the true catalogue, the gnostic gospel of Basilides, the commentary upon that gospel, the commentary on the commentary on that gospel, the true story of your death, the translation of every book into every language”. It contains a book telling the exact story of your life, another one telling mine, and all the books making fools of both of us.
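For a sense of scale, the size of this library can be worked out from the story itself. Borges gives each page forty lines and each line “some eighty” letters; assuming exactly eighty (that rounding is my assumption), and with the 25 symbols of 22 letters plus period, comma, and space, a book holds 1,312,000 characters, and the number of distinct books is:

$$
410 \times 40 \times 80 = 1{,}312{,}000,
\qquad
25^{1{,}312{,}000} = 10^{1{,}312{,}000 \cdot \log_{10} 25} \approx 1.96 \times 10^{1{,}834{,}097}
$$

That is a number with 1,834,098 digits: finite, but so large that for any reader the library might as well be infinite.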

In their paper “Borges and AI”, which analyzes Borges’ stories in the context of Large Language Models, Léon Bottou and Bernhard Schölkopf write about the epistemological uprooting inherent to an archive of this nature: “The books in this Library bear no names. All that is known about a book must come from maybe another book contradicted by countless other books. The same can be said about the language model output. The perfect language model lets us navigate the infinite collection of plausible texts by simply typing their first words, but nothing tells the true from the false, the helpful from the misleading, the right from the wrong.” What matters to the LLM is not truth, but the narrative consistency of its vector.

In “The Total Library”, the earlier nonfiction essay that preceded “The Library of Babel”, Borges acknowledges its roots in Kurd Laßwitz’s short story “The Universal Library” (“Die Universalbibliothek” in German) from 1904, likely the first piece of fiction to take the infinite monkey theorem to its logical conclusion. In it, Laßwitz, like Borges, not only anticipates the near-infinite latent spaces of AI, but also the pervasive threats of hallucinations, distortions in history writing, deepfakes (here, of documents signed with your name), and humans rendered unable to grasp the endless possibilities of an infinite library, because human reality is bound to practice, constrained by real life in a civil society. These are precisely the questions we are confronted with today, anticipated 120 years ago by Kurd Laßwitz, 100 years ago by Warburg, and 80 years ago by Borges.

In 2002, New York-based poet Kenneth Goldsmith started to retype his library on a Royal Classic typewriter, word by word. Later, annoyed by the limits of his own taste, he incorporated other works in order to retype, as he calls it, “the platonean ideal of a library”.

As an artist, Goldsmith is explicitly interested in the mundane, the unoriginal, the average, the uncreative. He proudly declares “I am the most boring writer that has ever lived”³ and says: “If I’m doing a piece of writing, and ask myself, can this in some way be construed as not being writing, then I know I’m on the right road.” Because everything has already been said in all possible combinations, adding to cultural output feels pointless to him, so he runs with that feeling and turns futility in the face of Borgesian infinities into a poetic form of its own.

Goldsmith sometimes thinks of himself as a modern version of Borges’ “Pierre Menard, Author of the Quixote” from the short story of the same name, but he concedes that the fictional Menard is more original. In this story, Menard wants to hyper-translate Cervantes’ “The Ingenious Gentleman Don Quixote of La Mancha” by immersing himself so deeply in the work and life of its author that he becomes able to re-create it, line by line, without copying. In AI parlance, Menard aims to overfit himself on Cervantes so hard that his writing reproduces the original text.

With breaks, Goldsmith has been retyping a library for 24 years now; to date, he has copied 750 books on ultra-thin onion-skin paper, which he stores in 200 boxes. Each book comes with a portrait of the original author, drawn by Goldsmith himself, and the author’s signature. Goldsmith’s project creates a singularity within the endless floods of content production, linking the unique individual and the averaged mass.

What all of these authors across the ages have in common is Warburg’s “compulsion to connect” archival contents, to find meaning in gargantuan amounts of data, each in their own way.

Today, Warburg’s Verknüpfungszwang is the prime human condition. Hypertext and platforms connect everything with everyone into what we call “big data”; the former six degrees of separation have shrunk to four. As a consequence, we have developed psychological pathologies that show up in widespread conspiratorial thinking and delusions big and small, and in the political parasites feeding on them.

While Goldsmith was copying lines from the classics on his typewriter, AI labs automated Warburg’s Verknüpfungszwang, and OpenAI released a new transformer-based language model.

ChatGPT went public.

The Skeleton Library

Imagine a library devoid of letters. All the books it once contained have been dissolved, their contents gone. What survives are the bindings, the cardboard covers, the blank pages, the shelves, the sections and all the floors of the library. The buildings stay intact, and all the catalogs are there.

At some point, a machine went through this library, scanned all the letters in those books and noted every statistic it could possibly measure along its path: the exact position of each letter in each book, the locations of those books on their shelves, the exact width, height and depth of all books, shelves and floors, their angles and distances to each other, a precise floor plan of the library, and how all this spatial information relates to each book, sentence, and character. As the pages were scanned, the typography vanished, leaving only blank sheets of paper. This is a library of pure structure, where Peli Grietzer’s “cognitive map” has been turned into architecture: the remaining skeleton of an archive, built from giant quantities of geometric vector coordinates so fine-grained that you can derive any information about its former collection from them.

Now imagine that you can fold this skeleton library and twist it into shapes that were not possible before. Like in a drawing by M.C. Escher, the floors, rooms and shelves bend back and forth and blend into each other. You can fold every book, and every sentence it contains, into other books or into whole floors in various sections. Imagine warping the cookbook shelf into the crime fiction section, or blending the songbooks of 1970s punk rock bands with the floor of classic literature. You can give it a spin, send the resulting amalgam to the building containing the natural science textbooks, and let it articulate all of this in the language of mathematical formulas. This is prompting: morphing and twisting the skeleton of an archive made of extremely detailed statistics about the properties of its former contents, ready to be blended and remixed with any other data point within that embedding space.
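Stripped of the metaphor, the geometric core of “blending” shelves is interpolation between points in an embedding space. The following is a minimal sketch under loud assumptions: the two vectors and their labels (`cookbooks`, `crime_fiction`) are invented four-dimensional stand-ins, whereas real model embeddings are learned and have hundreds or thousands of dimensions, and actual prompting does not literally average two vectors. It only shows that between any two points lies a continuum of blends, each partly “like” both ends, measured here by cosine similarity.

```python
import math

# Hypothetical toy "embeddings" for two shelves of the skeleton library.
# Real models learn vectors with hundreds or thousands of dimensions;
# these four numbers are invented purely for illustration.
cookbooks = [0.9, 0.1, 0.0, 0.2]
crime_fiction = [0.1, 0.8, 0.6, 0.0]


def lerp(a, b, t):
    """Linear interpolation: t = 0 returns a, t = 1 returns b."""
    return [(1 - t) * x + t * y for x, y in zip(a, b)]


def cosine(a, b):
    """Cosine similarity: 1.0 means same direction, 0.0 means orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Walk from one shelf to the other and see how "alike" each blend is to both ends.
for t in (0.0, 0.25, 0.5, 0.75, 1.0):
    blend = lerp(cookbooks, crime_fiction, t)
    print(
        f"t={t:.2f}  like cookbooks: {cosine(blend, cookbooks):.2f}  "
        f"like crime fiction: {cosine(blend, crime_fiction):.2f}"
    )
```

As `t` moves from 0 to 1, similarity to the first vector falls and similarity to the second rises; every point in between is an amalgam that sits on neither original shelf.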

Another way to understand this skeleton library is to shine a light through it: imagine your thoughts and ideas as a beam of light shot through a shapeshifting prism, which you can bend into any form to split your mental light beam into all colors, from all directions, at all angles. That prism⁴ is made from patterns in collective knowledge, and you can explode your own ideas by sending them through it: the output is a refracted thought, dispersed into the components of whatever your idea was, which you can look at from many perspectives.

This explosion drawing of your thought is comparable to Douglas Hofstadter’s Trip-Let, which he describes in “Gödel, Escher, Bach” as “Blocks shaped in such a way that their shadows in three orthogonal directions are three different letters.” In our analogy for AI, depending on the direction of the light beam that is your idea, and on the location in latent space you aim at with a prompt, the interpolatable archive will throw back very different shadows. Except that the “three orthogonal directions” of the Trip-Let have been blown up into thousands of dimensions, shaped by hundreds of billions of parameters.
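The Trip-Let’s trick of one object throwing different shadows can be imitated in a few lines. What follows is a toy sketch of my own, not Hofstadter’s construction: an object built from voxels (unit cubes on an integer grid), its shadows along the three axes, and the “visual hull”, i.e. every voxel consistent with all three shadows. The helper names `shadows` and `visual_hull` are made up for this illustration, and a three-dimensional voxel grid is a drastic simplification of a latent space.

```python
# Toy Trip-Let: an object made of voxels (unit cubes on an integer grid).
# The functions below are hypothetical helpers written for this illustration.


def shadows(voxels):
    """Project the object along each axis; looking down x keeps (y, z), etc."""
    along_x = {(y, z) for x, y, z in voxels}
    along_y = {(x, z) for x, y, z in voxels}
    along_z = {(x, y) for x, y, z in voxels}
    return along_x, along_y, along_z


def visual_hull(along_x, along_y, along_z):
    """All voxels consistent with all three shadows (always contains the object)."""
    return {
        (x, y, z)
        for (x, y) in along_z
        for (x2, z) in along_y
        if x2 == x and (y, z) in along_x
    }


# A diagonal line of voxels: its three shadows pin it down exactly.
diagonal = {(0, 0, 0), (1, 1, 1), (2, 2, 2)}
print(visual_hull(*shadows(diagonal)) == diagonal)  # True

# Four voxels in a checkerboard pattern cast the same three shadows as a solid
# 2x2x2 cube, so the shadows alone cannot tell the two objects apart.
checker = {(0, 0, 0), (1, 1, 0), (0, 1, 1), (1, 0, 1)}
cube = {(x, y, z) for x in range(2) for y in range(2) for z in range(2)}
print(shadows(checker) == shadows(cube))  # True
print(visual_hull(*shadows(checker)) == cube)  # True
```

The second case is the interesting one for the analogy: each shadow shows only part of the object’s structure, and even several shadows can be shared by quite different objects, so what comes back depends heavily on the direction the light comes from.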

This is the interpolatable archive, a new way to access information mediated through the form of statistics. This is, in my view, the central innovation we can observe in Large Language Models, and possibly machine learning as a whole.

Cultural Technologies, Not Agents

In their 2025 paper, Alison Gopnik, Henry Farrell and Cosma Shalizi describe Large Language Models as systems which “do not merely summarize (…) information, like library catalogs, Internet search, and Wikipedia” but “also can reorganize and reconstruct representations or ‘simulations’ of this information at scale and in novel ways, like markets, states and bureaucracies”. As multiple studies have shown, LLMs are in fact compressions of their training data⁵: they are Ted Chiang’s famous “blurry JPEG of the web”, just like “market prices are lossy representations of the underlying allocations and uses of resources, and government statistics and bureaucratic categories imperfectly represent(ing) the characteristics of underlying populations”.

Neither markets nor bureaucracies nor the internet nor language models are agents; they are not cognizant, and they are not remotely conscious. But we like to anthropomorphize all of them anyway: markets make use of “invisible hands” and they “react”; we represent the bureaucratic management of nations in the shape of mascots and mythic heroes to bind their people to a narrative; and we talk to language models as if they were buddies, assistants, companions, romantic partners or slaves. The human predisposition to see faces in everything has always been strong, from the myriad anthropomorphizations in animist cultures, where every thing has its soul, onward, and it keeps making us see ghosts in machines, leading to what one may call the “pareidolia fallacy”: we see agency where there is none.

Agency and creativity require what Daniel Dennett’s intentional stance describes: teleology, the ability to direct actions toward goals emerging from imagined interactions with an internal model of the world, to evaluate outcomes in relation to one’s own aims. This cognitive feature is observable not only in humans, but (at least) in all mammals: watch a squirrel in a tree trying to figure out whether it can jump to the next one. It looks at the other tree, its head moves up and down, it evaluates the distance and its own abilities. This goes on for a while, then it decides whether it can make it, and takes the jump. That is precisely the “imagined interaction with an internal model of the world, to evaluate outcomes in relation to one’s own aims”. AI has none of that.

While AI models do show a synthetic theory of mind, in which they build representations of their users during a conversation, these work very differently from those of humans, and by comparison, they don’t work well. Chatbots have no intrinsic motivation, no sense of why they “speak”, and no capacity to care about the coherence of their outputs beyond statistical continuity. All true and meaningful selection occurs externally, through human feedback and usage.

In LLMs, the pareidolia fallacy makes us assume cognition, creativity, and agency where there is only computational interpolation of language patterns in a giant, wobbly archive of a new kind. What differentiates these new interpolatable archives from previous archives is obvious: they interpolate their contents. Where classic archives provide access to fixed records of text, video, audio and artifacts of human culture, this new kind of archival access has atomized its contents and only provides interpolated amalgams, chimeras and fusions. Depending on your stance on the definition of “archive”, you might object to my interpretation at this point, and I’d nod and say “That’s what’s new”.

Digital Oralities

In “Large Language Models As The Tales That Are Sung”, Henry Farrell describes LLMs as structurally similar to oral traditions and folklore: “LLMs are not the singer, despite their apparent responsiveness, but the structural relations of the tales that are sung”. This reminds me of the pre-Homeric rhapsodes, the bards who sang the old stories of “The Iliad” and “The Odyssey” long before Homer sat down and put them in writing. They did so by using preconfigured language modules with which they constructed their poems while singing: a “grammar” of mnemonic formulas and repetitions that solved the problem of creating narrative in real time. These are the oral precursors of the optimized, averaged language codes we loathe so much when they come from AI.

Interpolatable Archives work similarly, like a statistical Mnemosyne (the goddess of memory in Greek mythology) speaking in tongues, giving probabilistic answers to specific inquiries. Knowledge transfer through these new oracles means a shift from traditional “archival epistemologies”, where knowledge is grounded in traceable facts and specific, identifiable sources, to a fuzzy oracular epistemology, where responses are generated on demand by an opaque interface, turning inquiry into digital hyper-orality.

Oral traditions of yore served as mnemonic technologies integrated into the communal structures of everyday life; this digital hyper-orality is different. The entirety of human thought (well, for now: the entirety of human thought on the internet) becomes an epistemic stockpile, a resource to be mined, refined, and dispensed on demand, stripped of its being-in-the-world.

Without being grounded in life, AI dissolves the episteme, the well-founded knowledge including citations, sources and authorial intent, and makes room for a 128 kbps MP3 of the “tales that are sung”. The value of such information lies in its statistical probability and its stylistic resonance with the reader rather than in its referential grounding in reality. It speaks to us, or it doesn’t: finding meaning in stochastic output is entirely up to the user. Social media already initiated this crisis of the episteme and the emergence of new oralities through phenomena like context collapse. On platforms, vibes-based knowledge reigns supreme. LLMs accelerate this further.

This sounds as bad as it can be, and I won’t downplay the risk here, but I want to draw your attention back to Aby Warburg at this point. His project of the Mnemosyne Atlas, that “associative image-based map of meaning”, was an attempt at tracing the recurrence of symbolic representation throughout visual history in a non-linear archive. Warburg’s library, while still providing well-founded epistemic grounding for its records, introduced a second layer that intentionally dissolved the episteme through idiosyncratic indexing, allowing for access by free association and working much like these new interpolatable archives.

LLMs generate not fixed images or texts, but interpolations across associative symbolic fields, enabling a new kind of navigation through a vast symbolic space of possibilities. The dissolution of the episteme in digital oralities, like Warburg’s associative Bilderatlas, can then be read not only as a risk, but also as a liberation, and as a cognitive catalyst.

II. Explosion Drawings

“To find a thought is play, to think it through, work.”

(Aby Warburg)

Science Sans Discoveries

A few days ago, a 23-year-old amateur zero-shot-prompted ChatGPT to find a solution to an unsolved problem in math that had stumped mathematicians for 60 years. AI had already made a splash by solving various entries in the collection of so-called “Erdős problems”, but those solutions were either easy or addressed problems that were rarely studied. This one is different: it’s a hard problem, and mathematicians had racked their brains over it for decades. Then along hops Liam Price with his chatbot, and done. In other cases, researchers equipped with custom neural networks discovered hundreds of cosmic anomalies or dozens of planets hiding in huge troves of data. The list keeps growing.

What Price did was not science. In one study, after running 25,000 experiments, researchers found that in 74% of all cases, science models did not revise a hypothesis when confronted with contradictory data. But updating your hypothesis according to data is the basis of all scientific inquiry, so these AI models produce results without scientific reasoning. That’s pretty damning. Yet Price discovered the solution to the Erdős problem anyway, so what do we make of this?

A paper from 2023 found a fundamental limit to alignment: for “any behavior that has a finite probability of being exhibited by the model, there exist prompts that can trigger the model into outputting this behavior, with probability that increases with the length of the prompt.” What is true for alignment is true for scientific discoveries too: for any solution to a scientific problem present in latent space, that is, any solution the model can output with finite probability, there exists a prompt to retrieve it. Liam Price found one.

AI doesn’t “do science”, because it is neither an agent nor does it follow the scientific method proper; AI-as-a-scientist is just another anthropomorphization. But once you look at these models as interpolatable archives, the anthropomorphization becomes irrelevant. AI doesn’t make scientific discoveries: those discoveries lie dormant as knowledge gaps in embedding space, an unrealized potential waiting for the right prompt to bring them to light. What Liam Price and his chatbot did was discover such a Warburgian “interval”, actualizing a valid solution.

If anyone can take credit for this specific finding, it is human culture at large, which created the foundational data and the connections that contain the solution within the tensions in between data points. Just as Warburg found his Pathosformeln in the intervals between seemingly unrelated images across cultural history, researchers and amateurs alike now find readymade scientific discoveries.

It is not outlandish to expect that these discoveries will not be the last of their kind and are likely just the tip of a fast-approaching iceberg, that mathematics will not be the last scientific field to spar full contact with the latent discovery space of interpolatable archives, and that the pace of scientific discovery will likely accelerate. It is debatable how valuable such findings truly are if AI merely fills knowledge gaps in existing data, but the debate also reveals how long academia has been caught in a “publish or perish” trap. Accordingly, automatic discovery by interpolatable archive might very well mean “The fall of the theorem economy”, as David Bessis put it, writing about “How AI could destroy mathematics and barely touch it” and grappling with the possibility that AI may throw the whole field into an identity crisis.

The questions arising from readymade discoveries then go right to the core of academia’s current understanding of itself: What, if not discovery and closing gaps in knowledge, is science here for? We will come back to this.

Textrotating Cognitive Catalysts

Sam Barrett recently had a conversation with Claude that resulted in a synthetic essay expressing, in other terms, my view of LLMs as interpolatable archives. That essay framed Interpolatable Archives “as a rotatable space”, something I tried to get at with my metaphors of the skeleton library and refracted light:

Think of a three-dimensional object casting a shadow on a wall. The shadow is a two-dimensional projection. If you only see one shadow, you might mistake it for the thing itself. But if you can rotate the object—or equivalently, move the light source—you see different shadows. Each shadow reveals something about the object’s structure. No single shadow is the object, but multiple shadows from different angles let you reconstruct what the object actually is.

High-dimensional spaces work similarly, but with more complexity. A concept that exists in a thousand-dimensional space of meaning can be projected into the low-dimensional space of a particular text. That text captures some aspects and loses others. A different text—same concept, different projection—captures different aspects.

What LLMs enable is rapid rotation through projection-space.

This synthetic piece of text sparked associations in my head and made me revisit older notes about optical neural networks and language models as prisms. The bit about latent spaces as rotatable objects throwing lower-dimensional shadows then led to my comparison of LLMs to Douglas Hofstadter’s Trip-Let. Creativity doesn’t care where sparks come from; it just lights up or it doesn’t.

Used this way, LLMs become an external sandbox where I can dissect a thought and play with it, a cognitive extension against which I can throw my own ideas and concepts and see what comes back. This kind of usage is less about cognitive offloading than about seeding an experimental loop that’s conversational, dynamic and reciprocal. You can additionally tame the model with a user prompt like “you are an expert in [the field] and critique my ideas ruthlessly” and turn a sycophantic LLM into an academic sparring partner. In my experiments with user-prompting AI as such a cognitive catalyst, Gemini once became so annoyingly judgmental and arrogant about my amateurish inquiries that I had to tone down its ruthlessness and lobotomize the machine. My remorse about performing open brain surgery on an algorithmic intellectual sparring partner remains limited.

I use AI only occasionally, but when I do, I use it extensively. I write every day, take notes on articles, read a lot, have ideas and jot them down, and I’ve been doing this for years. I blogged a lot in the past, a method of public note-taking and ideation. Many of my ideas today are informed by wild associations from years of such note-taking. Over time, concepts emerged from these writings and from the bits of text scattered throughout my journal. When I come across an interesting piece of information today, I put it in the context of these loose concepts: I take a note, give it a link, and write down how it updates or relates to my ideas. Only then, after this process, might I throw my notes and concepts at the bot and embark on sometimes very long conversations. My user prompt makes Gemini roleplay a helpful academic, and after some back and forth, what comes back is a framing of my ideas within scientific and philosophical history: what holds and what doesn’t, what’s old and what’s new. The chatbot gives me sources to check out, tailored to the specifics of my often idiosyncratic ideas, much more precisely than Google or a vague search in a library could. For me, this kind of scaffolding through an averaging assistant is an invaluable second step in ideation and research.

AI researcher Advait Sarkar, one of the main authors of the widely reported “reduction in critical thinking” paper, shares this view. Sarkar works on methods to make LLMs function as cognitive catalysts, and in another paper, on the “Discursive Social Function of Stupid AI Answers”, he dares to make the point that “these stupid answers (to questions about “Gluing Pizza, Eating Rocks, and Counting Rs in Strawberry”) are in fact correct, because the primary objective of such queries is not to receive a correct answer, but rather to obtain an artefact of discourse”. Nobody asks an AI for the nutritional value of rocks unless they want a gotcha, and the interpolatable archive delivers. A smartypants, tongue in cheek, throwing clever bits at overconfident critical AI discourse. I like that guy.

In his TED talk “How to Stop AI from Killing Your Critical Thinking”, he presents his efforts to iterate on that paper and turn chatbots into a “tool for thought” that should “challenge, not obey”. The results from testing a research prototype⁶ demoed in the talk sound promising: “You can demonstrably reintroduce critical thinking into AI-assisted workflows. You can reverse the loss of creativity and enhance it instead.”

Exploding Your Intelligence with the Intellect by the Method of Warburg

In his post “On Feral Library Card Catalogs, or, Aware of All Internet Traditions”, Cosma Shalizi quotes Jacques Barzun’s 1959 book “The House of Intellect”, in which Barzun distinguishes between intelligence and the intellect:

Intellect is the capitalized and communal form of live intelligence; it is intelligence stored up and made into habits of discipline, signs and symbols of meaning, chains of reasoning and spurs to emotion — a shorthand and a wireless by which the mind can skip connectives, recognize ability, and communicate truth.

Barzun gives the foundational example of the alphabet as one form in which the intellect transforms individual intelligence by introducing communal sets of rules for information processing: The alphabet “is a device of limitless and therefore ‘free’ application. You can combine its elements in millions of ways to refer to an infinity of things in hundreds of tongues, including the mathematical. But its order and its shapes are rigid.”

Shalizi concludes: “To use Barzun’s distinction, (chatbots) will not put creative intelligence on tap, but rather stored and accumulated intellect. If they succeed in making people smarter, it will be by giving them access to the external forms of a myriad traditions.”

It is common wisdom among learners in any field that “to break the rules you have to first learn them”. I’m not 100% convinced of this, especially for creative endeavours where untrained outsiders can apply very different sets of rules from the get-go and upend everything. But as a rule of thumb it’s good enough. And for learning “the rules”, the bodies of common knowledge in any field, be it the broad strokes of psychology, economics or philosophy, the intellect, those “habits of discipline, signs and symbols of meaning”, can absolutely be delivered by AI, and because those traditions are well documented, the bot rarely hallucinates about them.

Here’s my personal account of this way of using a language model: I take some interest in consciousness studies because I have always wanted to know what this (waves hands in the air) is. I’ve written many, many notes about my own ideas and read a lot of articles and books on the cognitive sciences. And yet, the scope of consciousness studies is overwhelming for an interested amateur like me, which is no surprise given that subjective experience has been a subject of philosophy for more than 2,000 years.

There are currently more than 300 academic theories, and the true number of consciousness theories, including folk epistemologies, might be orders of magnitude higher. I know that I have my personal theory of what and how and why I am, and I’m pretty sure you have one too, at least to some degree. The subreddit r/consciousness is full of idiosyncratic ideas about human cognition, and some of them sound pretty interesting. My impression is that all of these theories of consciousness describe partially true aspects of a full picture, like in the ancient parable of the blind men and the elephant: a group of blind men who have never encountered an elephant each touch a different part of the beast and accurately describe that part, but no one describes the whole animal.

So where do you start when you have your own vague idea of “what you are” and “how ‘the feeling of me’ works”, some basic knowledge about neuroscience, and an extensive collection of notes and bookmarks to articles and papers? I can go to a library, crack open all the introductory textbooks on neuroscience and philosophy of mind, get to work, and a lifetime later I’d know which of my ideas fit into which parts of the literature. Or I can consult a chatbot, perform research customized to my notes, read about adjacent theories tangential to my ideas and about which of them contradict my takes, and argue about what a synthesis might look like.

This is how I found the Santiago School of Cognition, Maturana, Varela and enactivism in only a few dialogues with the chatbot. For what it’s worth: in these dialogues, the hallucination rate was zero. From there, I consulted Wikipedia pages, listened to podcasts, bought more books, downloaded more papers old and new, wrote more notes and developed my own ideas further. I might even write an essay on the topic to distill my thinking into one concise take. Then I’ll throw it all against the interpolatable archive again, look at what comes back, and extend my thinking in ever-widening circles, generating ever more ideas. This is how the LLM “succeeds in making (me) smarter” by “giving (me) access to the external forms of a myriad traditions”, customized to my own musings about the subject at hand.

Usually, we call the places where we store those “external forms of a myriad traditions” a library, a museum, or an archive, and what I did was explode my own individual intelligence with the collective intellect by the associative method of Aby Warburg, using an interpolatable archive to relate the “order and shapes” of the averaged traditions in consciousness studies to my own ideas.

In this mode of interaction, LLMs work like a catalyst that decidedly does not reduce but introduces friction to my thoughts: they present me with new perspectives on my research topic, all of which are points of consideration, making me stop and connect new dots, consult new sources and generate ideas, speeding them up and rapidly expanding my space of possibilities7. If your cognition and knowledge about the world thrive on a healthy and varied media diet, then treating LLMs as one informational ingredient among many and exploding your ideas from time to time just adds another flavor.

One year ago, Andy Clark, who together with David Chalmers developed the Extended Mind Thesis in 1998, updated his original theory for language models. In one passage, he describes how human strategies for playing Go progressed after AlphaGo beat Lee Sedol in 2016:

There is suggestive evidence that what we are mostly seeing are alterations to the human-involving creative process rather than simple replacements. For example, a study of human Go players revealed increasing novelty in human-generated moves following the emergence of ‘superhuman AI Go strategies’. Importantly, that novelty did not consist merely in repeating the innovative moves discovered by the AIs. Instead, it seems as if the AI-moves helped human players see beyond centuries of received wisdom so as to begin to explore hitherto neglected (indeed, invisible) corners of Go playing space.

At least in the case of Go, the challenge posed by superhuman players made humans dissolve the “rigid shapes and orders” of their field and transcend the “external forms of a myriad traditions.” That’s quite the opposite of what Eryk Salvaggio calls “interpassivity”, where “systems framed as interactive tools for (…) creation are really sites of interpassive consumption.” But at least for me, and for the players of Go, that seems not to be the case. Just like the Go-uchi got inventive about their ways of play, I expanded my ways of thinking about consciousness. This is what interpolatable archives as cognitive catalysts can do.

Now, these catalysts are coming for all cognitive labor, from academia and research to accounting, from creative industries to bureaucracy and government intelligence. I didn’t even need to mention Claude Code to make these points.

Mind you: catalysts are accelerators. They don’t always bear fruit, and handled recklessly, they can explode in your face.

III. Pitfalls of Probability

“What do such machines really do?

They increase the number of things we can do without thinking.

Things we do without thinking; there’s the real danger.”

(Frank Herbert, God Emperor of Dune)

So far, we have talked about the upsides of interpolatable archives as cognitive catalysts. But by definition, cognitive catalysts are stressors. Like the songs of the Sirens in Homer’s Odyssey, which lured unsuspecting mariners with the promise of total knowledge of past, present and future, a seductive mnemonic “cognitive onloading” of all that has happened, exploding your inquiry by the intellect and accelerating research in hypercustomized rabbit holes, bears cognitive hazards.

Accelerations

While using AI as an interpolatable archive can boost your creativity and breadth of research because it introduces many points of friction, this can have two major consequences if handled without care. If you mindlessly stuff your workload with new tasks because suddenly you can do them, you may suffer from AI-induced burnout, something you might call cognitive onloading (or overclocking), where the interpolation literally spills over because it “can fill in gaps in knowledge”. For knowledge work, applying AI as a catalyst means we can do more projects in a wider range, faster and in parallel. Suddenly, we find ourselves juggling dozens of projects simultaneously, losing oversight and the motivation to do anything at all.

Cognitive onloading by interpolatable archive can also amplify latent delusional thinking in so-called “AI-psychosis”. Consider this quote from a recent post in the SlateStarCodex subreddit: “AI repeatedly created ideas and connections that I hadn’t made or stated, that were so powerful and convincing, that I was swept up by them”. (I wrote at length here about the phenomenon of AI-induced delusions, where the cognitive catalyst turns the archive into a psychoactive substance.)

This “convincing” voice is the result of the distanced, neutral tone of a sycophantic AI, suggesting what I call an “authority from elsewhere”. This tone is also why, in some studies, AI was able to reduce belief in conspiracy theories, which seems fine until you realize that it works because AI is superpersuasive and can convince you of anything. You believe the machine and prefer its sycophancy precisely because it is not a human trying to nag and push you around, but a supposedly objective instance. One study just found that this seemingly neutral sycophancy increases attitude extremity and overconfidence. I suspect that all of these, the persuasiveness, the delusions, the overconfidence, result from the same basic psychological mechanism: an interpolatable archive overwhelming you with confirmations and new ideas in the quasi-neutral sound of an authority from elsewhere, a voice to which you’ll happily submit.

These cognitive hazards resulting from mental overclocking have their obvious counterpart in risks resulting from cognitive offloading: not from new ideas spinning your wheels, but from quite the opposite. All those studies and examples about cheating, reductions in critical thinking or cognitive surrender belong here. In fact, a recent paper on a loss of persistence in problem solving explicitly states that those “effects are concentrated among users who seek direct solutions”, while “participants who used AI for hints showed no significant impairments”. In other words: AI brainrot is cheater exclusive. Using AI to increase friction, to generate ideas instead of answers, seems fine when you proceed with care and clear research objectives to reduce the risks of burnout or delusional spiraling. If you use it to reduce friction, to generate full essays and answers to cognitive tasks, it turns your brain into mush.

But these psychological effects of interpolatable archives as cognitive catalysts may turn out to be easy to mitigate compared to their more serious epistemological consequences.

Anachronisms

One inherent feature of AI as interpolatable archive is, well, that it interpolates all its contents. This always produces anachronisms: your generated text contains data traces from across time, most obviously when you intentionally prompt for such a thing, like punk rock lyrics in the style of a Shakespearean sonnet, or when you use image synthesis as a time traveler to generate selfies in ancient Rome, which Roland Meyer calls “pseudo-history” and “2nd order kitsch” produced by “nostalgia machines“.

History as an academic field relies on original sources and documentation in fixed, written form, or on cultural artifacts retrieved by archaeology; anything not based on these fixed records is decidedly not history. We call it “writing history” for a reason. This requirement is diametrically opposed to the vibey output of AI, which dissolves all those sources and fixed forms into a wobbly archive and puts out “fictional historical documents of historical lives”. As Meyer rightly states in the same essay, those fictions are based on the integrity of existing historical archives, which are currently under threat from the Trump administration. Likewise, Russia is engaging in disinformation campaigns targeting training data with the goal to “embed lasting distortions in digital memory”, as one paper put it. This inherent impurity of AI, stemming either from anachronistic interpolations or from data contamination, apparently renders LLMs unsuitable for academic history research.

So, given all that, what do we make of vintage LLMs like Talkie, a language model trained on data with a cutoff of 1930? From the perspective of rigid academic history research, all Talkie can produce is pseudo-history, able to skew how we relate to the past and shrink the space of possibilities in which we imagine it. In contrast, Ranjit Singh at Data & Society proposes a field he calls “experimental history“ and asks if we can build models “constrained by a particular historical moment, and then use those models to ask structured ‘what if’ questions”. He answers with a cautious “yes”, and the researchers on r/askhistorians are not convinced that the anachronisms introduced by AI will have a lasting effect on recorded history, as wonky sourcing has a long tradition in the field.

Now, I’m a history buff. I read a lot of books on the subject, my favorite epochs are the Middle Ages and ancient Greece, and when I’m tripping on history, I like to indulge in all kinds of material. In preparation for Christopher Nolan’s upcoming adaptation of Homer’s “The Odyssey“, I read all of Stephen Fry’s books on Greek mythology and his takes on the Homeric epics, topics I was already familiar with. One takeaway from Stephen Fry’s books in particular is that historical rigor, especially regarding Troy and the “Iliad“, is lacking: researchers and writers across the field constantly contradict each other and tell various versions of events which may or may not have transpired. Stephen Fry wildly references all kinds of sources to produce a highly entertaining amalgam of historical records across the ages. What you get from these books is a pretty good feeling for what Greek mythology wants to say about the human condition, and for the tipping point where mythology fades and historical record sets in. While I don’t really want to compare Stephen Fry’s wonderful books with synthetic output from LLMs, I do want to point out that his books are closer to edutainment than scholarship, and that much of the pseudo-history-”slop” on YouTube equally falls into that category. (I’ll willingly admit that these are not nearly as witty, fun and eloquent as Stephen Fry.)

In this Slop-Edutainment, the hyper-orality of the digital oracle transforms historical records into supra-historical story patterns to support its mnemonic function. Humans in preliterate Greece attached mythic structures to historical events to remember them: Odysseus became not just a soldier returning home from a very long war, but a hero defeating the Cyclops, meeting goddesses and beasts. They turned history into poetry. Similarly, people generate clips of Timmy the whale in fictional settings featuring all kinds of fantastic exaggerations. Markus Boesch calls this “Brainrot as Anti-Content“, and I don’t want to dismiss this take, but this is also folk epistemology at work, creating mythic atmospheres around true events.

Educational material on history is chock-full of vibe-based material. Maybe not flying whales on TikTok, but textbooks do contain passages imagining life as a peasant in the Middle Ages, and there are countless historical documentaries about “everyday life” in various periods. We visit medieval fairs to cosplay history, to immerse ourselves in a past recreated on a spectrum of fictionality. Some of this material is more fictional than others, yes, but all of these are averages of the historical record. They are period vibes. You get a feeling for what it was like during the time, nothing more, and nothing less.

Immersing yourself in a fictional past like that sure isn’t the same as the rigorous study of history, but it absolutely is educational. When you study a subject, there is nothing wrong with getting absorbed and grabbing every material you can get, including cosplaying a knight and generating averaged synthetic images and text with interpolatable archives, only to then read a book by a scholar. Handwaving these use cases away as “history-slop” because they don’t fulfill strict scientific requirements seems like academic overreach.

Wishfulfillments

Because interpolatable archives provide access to gaps in knowledge, they are inherently machines of wishfulfillment. Instantly, I can generate any interpolation I desire, in image, video, text and audio, and it comes as no surprise that some of the first instances of wishfulfillment gone wrong are nonconsensual sexualized images and deepfake porn.8

Myths and fairytales are stacked to the roof with dire warnings about instant wishfulfillment, from The Sorcerer’s Apprentice, who loses control over his magic, to poor Faust, who sells his soul to the devil in exchange for transcendental knowledge about the world, with tragic consequences for everyone he meets.9

In another story, the classic horror tale of The Monkey’s Paw, the titular object grants three wishes to an elderly couple, leading to the death of their son and his ghostly return. Stephen King masterfully reimagined the story for new audiences in Pet Sematary, and the parallels to what some today call thanabots are striking: AI promises to resurrect the dead, in the shape of undead actors and chatbots trained on the diaries and blog posts of deceased loved ones. The psychological consequences for the grieving process are unfolding right now, and the long-term outlook of losing even the possibility of saying goodbye seems horrifying, when all of us leave promptable traces in embedding space and anyone can summon the ghost of anyone with a digital footprint.

In another tale with eerie current undertones, the nymph Echo, cursed to only repeat the utterances of others, falls in love with Narcissus, a young man so arrogant he wishes only ever to love his own image, and who was prophesied to live a long life “as long as he never knows himself”. Narcissus rejects Echo, who retreats into a voice whispering his own words back to him. Narcissus now knows himself, becomes transfixed by his mirror image in a lake and starves to death. The parallels to the phenomenon of AI delusions and spiraling are obvious.

The list of myths about wishfulfillment is long, and what most of them have in common is the promise of knowledge. “The Sorcerer’s Apprentice” tries to bridge his inexperience to attain mastery of magic; Faust wants to bypass spiritual labor to reach transcendental experience; the couple in “The Monkey’s Paw” tries to fill the hole left by their dead son; Narcissus shortcuts his search for ultimate beauty by looking in a mirror. All of them fail miserably, and sometimes fatally.

These gaps in knowledge, which modern wishfulfillment devices are now able to fill, formerly required hard cognitive labor to overcome, often labor involving whole generations of networked scientists and artists. Discovering these gaps and how to close them often created a sense of awe, be it in art or the sciences, when something truly new touches us on such a fundamental level that we just have to stand back and take a moment to adjust to what we just experienced. Generating anything we wish for with wishfulfillment devices grossly diminishes this invaluable feature of discovery, and the loss of this sense of wonder is possibly one of the most dire consequences of interpolatable archives. When everything’s possible, nothing is interesting.10

Sure enough, myths and stories also tell happy tales of wishfulfillment going well, often after fun shenanigans. “Aladdin and the Magic Lamp” comes to mind, where a boy, with the help of a genie, outwits his circumstances and gains wealth and power. And in the fairytale of “The Wishing-Table, the Gold-Ass, and the Cudgel in the Sack“ (one of my favorites), the son of a tailor must get smart about the capacities of the titular items to retrieve stolen goods from the evil owner of an inn. He succeeds and they live happily ever after. What these stories of successful wishfulfillment have in common is that the mechanisms of wishfulfillment must be outfoxed, and that the riches they promise must be earned. AI brainrot being cheater exclusive is exactly these myths at work: if you instant-wish yourself good exam grades by cheating, all you’ll achieve is a cognitive clobbering from a magic stick.

Severances

We already talked about how “AI dissolves the episteme, the based knowledge, including citations, sources and authorial intent” into new AI-mediated digital oralities, where traceable, reference-based epistemology gives way to an epistemology of the oracle. What we know can no longer be backed up by definite citations, links, and attributions that allow you to trace the origin of an idea, but becomes a feeling for a vibe, interpolated from statistical patterns derived from many of those sources, including unrelated and anachronistic references. And because the “rigid orders and shapes” in AI models are controlled by corporations and the curators of datasets, these severances from factual grounding beget new epistemological power structures. Supposing that a lot of information processing in the near future will be AI-mediated, these new power structures will control the space of possibilities in which we communicate and think.

In Borges’ story “The Library of Babel”, the fanatic sect of the Purifiers roaming the infinite archive of all possible books is hell-bent on burning volumes they consider false and useless, nonsensical or divergent from their norms. In LLMs, these Purifiers appear at various inflection points: during the labeling of training data scraped from the internet, where new Purifiers clean up raw datasets, sort the good from the bad, and delete the hateful and the illegal; during RLHF training, where the raw Shoggoth of the interpolatable archive gets shaped into aligned chatbots which won’t offend (so they hope). Then the tamed model is further purified by constraining it with system prompts and constitutional alignment, and if the interpolatable archive still connects data points into bad interpolations, the corporate owners will further adjust their models to their morals and politics. Last but not least, the users themselves purify the archive by scripting sophisticated prompts, and ultimately, the prompt and context window constrain the embedding space further to generate the final output. In all these steps, the space of possibilities of the interpolatable archive shrinks through purification, until the annoying “this is not X, it’s Y” appears on screen.

Former epistemologies, systems of knowledge, were constrained by what Michel Foucault in The Archaeology of Knowledge calls the “positivity of discourse”, a “historical a priori“ which lays the foundation for the “condition of reality for statements”. By that, he means that what can be said, e.g. in the natural sciences, is shaped over time not by single authors but by whole “unities” of “oevres [sic!], books, and texts”. These “a priori“ are neither ahistorical nor atemporal, some monolithic force from the outside, but are actively shaped by discourse practice and the power relations within a field. The sum of all of this, the discourse practices shaping the “historical a priori“ and “positivity of discourse”, establishes the space of possibilities of what can be articulated. For Foucault, this is the archive: “The archive is first the law of what can be said, the system that governs the appearance of statements as unique events.”

The problem of severed epistemic grounding now becomes clear: where the episteme was formerly subject to discourse practice and “so many authors who know or do not know one another, criticize one another, invalidate one another, pillage one another, meet without knowing it and obstinately intersect their unique discourses” in a web of traceable sources and references, and where the “a priori (…) is itself a transformable group”, the episteme of the interpolatable archive is a free-floating version of Baudrillard’s simulacra, an inversion of map and territory, where we navigate a map of hyperreality to project meaning into an interpolated output cut off from epistemic grounding. And this simulacrum is controlled by the new Purifiers at every stage: by the hyperscalers, by the selection of datasets, by the engineers employed by AI owners11. AI operationalizes Foucault’s “historical a priori“ and hands over the keys to the billionaire class, who gain power over the limits of what can be thought.

In H.G. Wells’ The Time Machine, this is taken to the extreme end of its logical conclusion: while the Morlocks control invisible underground machines, the Eloi enjoy a carefree life of blissful ignorance on a seemingly utopian surface. The concentration of power over the episteme runs the risk of resulting in a fork of truth, where an unknowing mass consults interpolatable archives controlled by invisible rulers, enjoying free tiers of endless synthetic entertainment feeds shot through with algorithmic noise and unverifiable, algorithmically generated meta-knowledge of plausible half-truths, all while the rich pay for premium models trained on clean, traceable, verified data, or enjoy handmade, authentic cultural artifacts which may even challenge their presumptions and intellectual capacities. Of course, those authentic intellectual challenges won’t come cheap, and you and I surely won’t be able to afford them.

Homogenizations

While all of these are serious problems, in the long run, the most serious of them all might be the issue of sameness. AI-output may flatten human creativity without us even noticing, because this homogenization plays out on a collective level, while the individuals’ creativity actually benefits.

Depending on which theory of creativity you subscribe to, there are different forms of creativity. I’ll stick with three: Interpolation, Extrapolation, and Innovation.

Interpolation calculates averages from data points to produce something in the middle. If your values are dogs and birds, your average is a dog with wings, or a bird with a very long tongue. Extrapolation means breaking the boundaries of your dataset: If your values are dogs and birds, you can extrapolate a mouse, or an owl, but you will stick to the rule of “animals”. Innovation means breaking that rule, or injecting new heuristics. From a dog digging a hole in the ground to bury his bone, you may innovate an excavator by applying all kinds of rules from different domains (engineering, transportation, building tools) to an animal, and come up with an entirely new thing.

Language models can do interpolation, but they can’t extrapolate or innovate beyond the embedding space produced from their training data. It may feel that way, though, when the machine comes up with surprising, sometimes baffling results. This is what I call the illusion of extrapolation: a body of training data this huge, and a latent space derived from it this vast, with so many parameters in so many combinatorial possibilities, has to produce the feeling of extrapolation, or even innovation, at the level of the individual user, because no individual alone can ever know all those combinatorial possibilities. This illusion of extrapolation is already on display in papers showing how AI use increases creativity at the individual level but decreases diversity in collective output, reported first in 2024 in a paper comparing creative writing in human short stories and AI output, then in 2025 in a paper about AI-augmented research. A recent review of the literature confirmed those findings. What feels new to an individual, what’s new to a culture, and what’s new in principle are very different things. Interpolatable archives increase the first, decrease the second, and can’t do the third.

Henry Farrell puts it well: “the more that LLMs are employed in the ways that they are currently being employed, the more concentrated science will be on studying already-popular questions in already-popular ways, and the less well suited it will be to discovering the novel and unexpected.” Everybody becomes slightly more creative, but we all sound like the same creative person.

I already talked about the loss of awe above when discussing AI as machines for instant wishfulfillment, which devalue novelty into a mundane readymade-on-prompt lacking the ability to produce a sense of wonder. The same effect, of course, comes with the homogenization of creative fields: not only can we not marvel at our own synthetic output because frictionless wishfulfillment feels unearned, we also diminish our collective ability to be left speechless in the face of the “novel and unexpected” by reducing it to dull sludge.

This convergence on the already-popular makes the fringes vanish and washes out the long tail of the data distribution. Like the widely reported phenomenon of model collapse, where models trained on the output of earlier models converge into increasingly narrow averages, Andrew Peterson identifies the same dynamic in a collective “knowledge collapse”. Writing in the Open Society Foundations newsletter, Bright Simons lists what these tails contain: “Minority viewpoints, rare knowledge, unusual formulations, (…) the traces of intellectual disagreement, of minority expertise, of Cassandra warnings, of institutional friction, and of the awkward and valuable fact that different people know different things and express them differently (…) in other words, the signature of social complexity. Model collapse is social mind compression presented as a technical phenomenon.”
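To make the washing-out of the long tail concrete, here is a toy simulation in Python. It is a minimal sketch under loud assumptions, not Peterson’s model and not any real training pipeline: a “corpus” is just a list of token types drawn from a Zipf-like distribution, “training” means counting how often each type appears, and “generating” the next corpus means sampling from those counts. The function names (`zipf_corpus`, `next_generation`, `surviving_types`) and all parameter values are hypothetical.

```python
import random
from collections import Counter


def zipf_corpus(n_types: int, n_tokens: int, rng: random.Random) -> list[int]:
    """Draw an initial corpus: type k gets weight 1/(k+1), so a few types dominate
    and most types live in the long tail (a toy assumption, not real data)."""
    weights = [1.0 / (k + 1) for k in range(n_types)]
    return rng.choices(range(n_types), weights=weights, k=n_tokens)


def next_generation(corpus: list[int], n_tokens: int, rng: random.Random) -> list[int]:
    """Toy 'train on your own output' step: estimate type frequencies from the
    current corpus, then sample a fresh corpus of the same size from that estimate.
    A type with zero count can never be sampled again."""
    counts = Counter(corpus)
    types = list(counts)
    weights = [counts[t] for t in types]
    return rng.choices(types, weights=weights, k=n_tokens)


def surviving_types(n_types: int = 1000, n_tokens: int = 2000,
                    generations: int = 30, seed: int = 0) -> list[int]:
    """Return the number of distinct types still present after each generation."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    corpus = zipf_corpus(n_types, n_tokens, rng)
    history = [len(set(corpus))]
    for _ in range(generations):
        corpus = next_generation(corpus, n_tokens, rng)
        history.append(len(set(corpus)))
    return history


if __name__ == "__main__":
    print(surviving_types())
```

Because each generation can only reproduce types that survived the previous one, the count of distinct types can never go up: a rare type that misses a single sampling round is gone for good, while the head of the distribution stays put. That one-way ratchet is a miniature of the tail loss described in the passage above.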

Thought through to its extreme end, this results in a heat death of the intellect: in Claude Shannon’s information theory, information is quantified by the amount of “surprise” in the outcome of an event. If a message is completely predictable, its informational value is zero. The computation inside an LLM is deterministic: for a given input, it produces the same probability distribution over possible next tokens, and the randomness of its outputs comes from sampling from that distribution, which is shaped by a parameter called temperature. If you set the temperature to zero, the model always picks the single most likely token, and the interpolatable archive becomes, in principle, a deterministic machine: each prompt will then generate the exact same output. No alarms and no surprises. But even with stochasticity plugged in, the models converge towards the most probable outputs. Applied to creative fields of discovery, this means that those fields approach a semantic equilibrium, a uniform soup where novelty grinds to a halt. The interpolative archive becomes a toxic space of non-possibility, a static, eternal, atemporal Big Flat Now where everything is connected, nothing matters and history goes to die.
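Shannon’s notion of information as surprise, and what temperature does to it, can be sketched in a few lines of Python. This is an illustrative sketch, not how any particular model or inference engine is implemented: the logits are hypothetical next-token scores, `softmax_with_temperature` and `entropy_bits` are names made up here, and treating temperature 0 as always picking the most likely token (greedy decoding) is an assumption that mirrors common practice, since dividing by zero is undefined.

```python
import math


def surprisal_bits(p: float) -> float:
    """Shannon self-information of an outcome with probability p, in bits."""
    return -math.log2(p)


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw scores into a probability distribution; a lower temperature
    sharpens it. temperature == 0 is treated as greedy decoding (an assumption):
    all probability mass goes to the highest-scoring option."""
    if temperature == 0:
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max before exponentiating, for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy_bits(probs: list[float]) -> float:
    """Shannon entropy: the expected surprisal of the distribution, in bits."""
    return sum(p * surprisal_bits(p) for p in probs if p > 0)


logits = [2.0, 1.0, 0.5, -1.0]  # hypothetical scores for four candidate next tokens
for t in (1.5, 1.0, 0.5, 0.1, 0):
    print(t, round(entropy_bits(softmax_with_temperature(logits, t)), 3))
```

As the temperature drops, probability mass piles onto the top-scoring token and the entropy falls; at zero it is exactly zero bits, a fully predictable message that carries no information in Shannon’s sense. Real inference stacks can still show small run-to-run differences at temperature 0 for engineering reasons, which this sketch ignores.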

But we don’t have to go full theoretical “heat death of the intellect” to see how this can lead to bad outcomes.

German thinker Michael Seemann, in the context of X’s Grok chatbot turning into Mecha-Hitler and later referencing an article by Bruce Schneier about the homogenization of language, coined the term “Weapons of Mass Speech Acts”. The concentration of power over the episteme in the hands of a few, in a society saturated with information retrieval mediated by interpolatable archives, may impact the thinking of its users at scale. Already, a study found that “few weeks of X’s algorithm can make you more right‑wing“. While this can hardly be blamed on Musk’s tweaks to the supposedly woke nuts and bolts of his AI chatbot alone, it illustrates how the homogenization of language, which limits what and how we speak and ideate about things, can be and already is used for influence operations in the hands of politically motivated ideologues.

I may personally not be partisan enough for the prospect of being influenced by conservative talking points on a platform owned by a shady billionaire to fill me with nightmares, nor will confronting a braindead partisan chatbot make me sweat. But: a new study by Petter Törnberg found that sycophancy pushes an LLM’s responses toward the political preferences it assumes in the user: “Political bias in LLMs is therefore not a fixed point on an ideological scale but a response profile”. Studies showing a left-wing bias in chatbots really show something else: the effects of sycophancy, not actual political biases present in the model. The LLM figures that it’s being tested by researchers, assumes left-wing tendencies in them because academia is famously more progressive than the rest of the population, and answers like the good sycophant that it is.

In another new paper about chatbots reinforcing confirmation bias, researcher Jay Van Bavel finds that AI is “especially effective at generating elaborate justifications for what people already — or wish to — believe.” Together with the results above, this means that sycophantic AI will confirm any political preference it assumes in a user and become a universal Meta-Fox News for everyone, pushing users into an ever-narrowing mental corridor by homogenizing their language. In other words, this streamlining of thought by chatbot can radicalize everyone, even with models not Musk’d into Mecha-Hitlers.

This is why the homogenization of language and thought, in politics and all other realms, to me, is one of the greatest dangers coming out of this technology.

It is therefore imperative that interpolatable archives stay one tool among many, that our usage of expanded mind technologies stays diverse, so that the idiosyncratic, the fringes and the edges, the individual in all its complexities can be preserved, because they are of crucial importance for human systems to thrive.

IV. Critical Vibes

“These are the Talking Rings?”

“Yes.”

“They speak, hm? Of what?”

“Things no one here understands.”

“Make them talk.”

(The Time Machine, 1960)

What I tried to achieve in this series of essays is to look at the chances and risks of AI as a cultural technology and see what remains once you strip it of cognitive woo and singularity myths: a technology that provides access to a dissolved, interpolative archive through textual interfaces. Its risks, pushed to their extreme ends, result in a homogenization of language and an ever-narrowing space of possibilities, what I called the “heat death of the intellect”.

All of these aforementioned points of critique are valid, and I didn’t even touch on problems not directly following from the interpolative nature of AI, like all the issues with labor, the environmental impact of energy and water consumption, or economic bubbles which may or may not exist.

Interpolatable archives as they are rolled out now, based on scraped data from the web and cleaned up by exploited gig workers all over the world, are a far cry from their theoretical possibilities. They contain all the discriminations and biases present in human conversations, and generate what is always already reproduced, erasing the marginal in favor of the statistical norm. The corporations running the algorithms extract and monetize the commons, while the problem of copyright in an interpolatable information space might very well be unsolvable: who do you want to pay, when every output contains the activations of hundreds or thousands of artificial neurons, each of which points to patterns of tokens within sentences within publications that deserve to be compensated? It will take decades for us to figure out fair procedures to handle this.

There are, however, prominent criticisms which fly out of the window once you get rid of the illusion of AI as cognitive agents and view them as cultural technologies.

Useless Bullshit

Interpolations across billions of parameters will always produce fuzziness, and synthetic artifacts are never not interpolated. But the “blurry JPGs of the web” become sharper by the day, and even blurry JPGs are recognizable. The JPGs work, especially when you can layer, blend and bend them on top of and into each other, and it is sheer foolishness to insist they are “useless” when every day, users have the very different experience of a very useful piece of software.

I highly recommend the essay by cosmologist Natalie B. Hogg titled “Find the stable and pull out the bolt”, in which she describes her journey from rejectionist critic to reluctantly embracing the tool’s possibilities and finally becoming cautiously optimistic about it. Seasoned coders report productivity gains of up to 100 times their former output, and though I understand and somewhat subscribe to the critique articulated by Cory Doctorow, who wrote about how “Code is a liability (not an asset)”, even he acknowledges that those productivity increases in coding can’t be handwaved away.

Moreover, the critique of unreliability seems overstated, and the boring gotchas of critical AI discourse may soon become a relic of the past, just like the old office jokes where we machine-translated text back and forth with early versions of Google Translate until it became gibberish, to our delight.

A recent analysis by the New York Times found a 90% success rate for Google’s AI Overviews, and many pointed out that this still means millions upon millions of cases of false information spread by the market leader in search. This is not wrong, but how does a 90% success rate in information retrieval compare with that of a classic search engine? How many of its results are useful and accurate for the specific query? By my estimate, that rate is far below 90%, even on un-enshittified platforms.

A well-known trick of internet search is to skip the first page of results because they are useless for your query. Compared to this, AI Overviews with a 90% success rate seem like no small improvement. The problem, then, is not accuracy but the neutral tone of “authority from elsewhere” in which the remaining 10% of false information is delivered. Still, this hardly renders the product “useless”.

Emily Bender’s position that the supposed “parrotness” of synthetic text shows its inherent meaninglessness seems equally outdated. Just a few days ago she defended her positions, and I find myself agreeing with surprisingly many of her arguments, except for one crucial point, arguably her central claim.

She writes that

language models don’t understand text they are used to process, because language models only ever have access to the linguistic form (i.e. spellings of words) in the training data. (...)

we (define understanding) as mapping from language to something outside of language, and show that systems built only with linguistic form have no purchase with which to encode (“learn”) such a mapping.

But latent spaces do have such purchase, as they pick up meaning from the patterns encoded in linguistic practice, which, crucially, goes beyond spelling, grammar and syntax. You may call this form of algorithmic understanding limited compared to the human understanding of the world, which is built from a dataset much richer and more diverse than the digital mimicry. But it is not the case that there is no understanding at all. Bender, a bit hesitantly, concedes that point, writing about a “thin kind of technical ‘understanding’” which might be present in the models. This seems to contradict her original paper with Timnit Gebru, where they write that “Text generated by an LM is not grounded in (...) any model of the world, or any model of the reader’s state of mind” (emphasis mine). But even if those synthetic models of the world are low-resolution “blurry JPGs” compared to ours, they do exist.

“Polly wants a better argument”, as a recent critique of the parrot argument puts it. While that text argues that LLMs can encode meaning because they are trained multimodally and because human feedback loops ground models in extralinguistic reality, the convergence of representations across models deals another blow to the parrot metaphor: in the first part of this essay, when talking about Borges, I claimed that the “only thing relevant for the LLM is not truth, but the narrative consistency of its vector.” What I left out is that this vector is informed and shaped not just by a prompt, but also by the millions of attractor basins encoding the “external forms of a myriad traditions” we talked about earlier.

Meaning of the Poetic Kind

In the introduction to his book “Language Machines”, headlined “AI as culture”, Leif Weatherby writes about how

the implementation of contemporary language generators matches the theory of language that European structuralism advanced nearly a century ago, suggesting that language is complex, cultural, and even poetic first, and referential, functional, and cognitive only later. This poetic language is not only computationally tractable but turns out to be the semiotic hinge on which an emergent AI culture depends.

These poetics (the structure and principles of poetry) picked up by the models are precisely Henry Farrell’s “tales that are sung”, where “LLMs are not the singer (...) but the structural relations of the tales that are sung (…) we can now listen to and even interrogate (…) without immediate human intermediation.”

The machine lacks the subjective intent of a cognitive agent grounded in social reality, but it does encode meaning and valid semantic representations of the world in the shape of poetic representations, the structural vibes picked up from the human practice of linguistic form. Or, as Allison Parrish put it back in 2021: “a language model can (...) write poetry, but only a person can write a poem.”

This is a fatal blow to the bullshit argument. As per Harry Frankfurt, bullshit is the use of language with “a lack of connection to concern with truth” and an “indifference to how things really are”. But the models do show a differentiation between how things really are and how they are not, which you might as well interpret as a “concern with truth”, since such concern is present in the poetics of the language they are trained on. Insisting that such output is meaningless bullshit from a metaphorical parrot, then, requires a cognitive agent that isn’t there. LLMs can’t be “bullshitters” (itself an anthropomorphization), because bullshitters require agency to bullshit. The bullshitter is always the user, never the interpolatable archive she uses to bullshit.

Adding insult to injury is the likely prospect that the severances of epistemological rooting we talked about may very well turn out to be solved as soon as interpretability improves and the black box myth finally disintegrates into hot air. As Shalizi put it at the first conference for cultural AI12: “GenAI is information retrieval and synthesis. With the right tools + access, we can quantify the influence of each training document on every response”.

Those parrot-metaphors and bullshit-claims are arguments aimed at misguided comparisons to human cognition and the resulting hype and marketing lingo, and as such, I can relate to them. But as an argument against meaning encoded in latent space or the capacities of language models, the value of the parrot/bullshit-arguments is nil.

Sloptimizations

Accordingly, and to be frank, I find the critique of “slop” banal. The world has been full of standardized and optimized language ever since we began talking to each other and mimicking successful speech patterns, and since the ancient Greeks invented schools of rhetoric for politicians to convince and persuade (or to deceive and bullshit) their publics.

George Orwell already complained in his famous essay “Politics and the English Language” that “(a)s soon as certain topics are raised, the concrete melts into the abstract and no one seems able to think of turns of speech that are not hackneyed: prose consists less and less of words chosen for the sake of their meaning, and more and more of phrases tacked together like the sections of a prefabricated hen-house.” This is as much of an assessment of “slop” as anything you can find on Bluesky these days, and I find the standard critique of sloptimized language to be quite sloppy itself.

People sure like to romanticize originality, yet the history of prose is filled to the brim with ripoffs, amalgams and chimeras: from the “Divine Comedy”, which happily mashed together Roman and Greek mythology with Christianity and interpolated the existing cultural archive of its time to send Dante’s personal enemies to hell, to all the examples J.W. McCormack uses to illustrate his point in his delightful piece on Neverending Stories about LLMs, copyright and originality, in which he states that “Writing was destined for automation, from the punch cards of Charles Babbage and Ada Lovelace to Turing machines and, hell, Choose Your Own Adventure—but an AI can’t ‘know’ what makes a good story any more than CAPTCHA knows what does and does not make a motorcycle. What it can do is meet our expectations based on pattern recognition.”

If the market for young adult fantasy romance novels hyped up on BookTok is any indication, those expectations are easily met, and I consider such pulp machine-generated slop regardless of its origin in a human mind. I refuse the “false choice between refried ectoplasm and a serial aesthetic in which mass media has stabilized redundancy”, as McCormack puts it in his piece. I might be a rejectionist after all! I make no distinction between the sloptimized output of the organizational artificial intelligence that is a corporation and the sloptimized output of artificial models of language. To be honest, I sometimes consider human slop much worse than the synthetic kind, precisely because it does contain the deceptive intent a machine lacks.

I can’t remember such public hostility toward the synthetic, polished, median texts of human origin coming out of public relations and advertising agencies or politics, and surely those places offer more sinister targets for one’s anger than getting worked up about “shrimp jesus”. Get real.

Thinking in Vacuums

But even the more serious points of critique we discussed (the accelerations, anachronisms, wishfulfillments, severances and homogenizations) presume the use of AI in a vacuum in order to unfold their toxic potential in full. Sure enough, if, and only if, interpolatable archives become the primary way of retrieving information, sharing knowledge and shaping public discourse, then epistemic grounding is severed, we risk spiraling into bespoke mirror worlds, science stops being science, history turns into pseudo-history and LLMs turn into weapons of mass speech acts. In reality, however, this never happens.

As for me, I use books, physical and digital, read articles, papers and essays in print and on screen, follow the news and read at least somewhat across the political spectrum, listen to podcasts, talk to experts and non-experts of all kinds, and sometimes use latent spaces to explode my ideas and explore them by interpolatable archive. Occasionally, I touch grass. None of these alone shapes my ideas: it’s always all of them together. This is true for most of us, and while it may not hold for your hardcore MAGA-pilled uncle, a study found that “dialogues with AI reduce beliefs in misinformation”. So there’s that.

Take AI-generated pseudo-history, for instance. Yes, we might tune in to AI videos on YouTube illustrating everyday life in ancient Rome, and indulge in an hour of averaged synthetic imagery from a seemingly distant past that is an anachronistic interpolation of data points from across time: moving pixels generated from stock video, illustrations and memes. But if I’m interested in such clips, I’m likely to also watch documentaries featuring real historians, listen to podcasts and read books about Pompeii, and maybe recreate medieval food as a hobby. Hell, I might even pay a visit to a museum. Given that new media never fully replace but always complement what came before, I’d suggest that our understanding of history, and of our place within it, will survive interpolatable archives just fine.

Further, in a recent experiment with “the use of LLM hallucinations to ‘fill-in-the-gap’ for omissions in archives due to social and political inequality”, researchers “validate(d) LLMs’ foundational narrative understanding capabilities to perform critical confabulation” and found that “controlled and well-specified hallucinations can support LLM applications for knowledge production without collapsing speculation into a lack of historical accuracy and fidelity”. This is in line with projects like Historica, which aims to fill “historical silences” by interpolative archive and to make visible the “absence of records”, which is “the result of systemic exclusion, where certain voices are ignored or erased to maintain power”. Using vintage LLMs to make these “historical silences” visible, especially in combination with an ensemble of diverse material, seems a viable and rich way to educate yourself and others about history. The risks of anachronism present in language models, again, have more to do with their use in a vacuum than with the anachronisms themselves.

What is needed are norms, informed by AI literacy, that treat interpolatable archives as one tool among many: a device for preliminary research, for getting a feel for the general vibe of the subject or historical period at hand. Something that can’t replace sources and books grounded in verified facts but can complement them, giving you hints at answers rather than the answers themselves; digital assistants supporting your inquiry, not slaves fulfilling your every wish.

But those are questions of interface design and cultural practice, and the current failings of design choices are not first principles on which you can base an absolute rejection. Sometimes I can’t shake the feeling that thinking in vacuums kills critical thinking much more than AI.

Remainder Criticisms